Building and maintaining distributed systems is challenging because of the intricacies of production environments, configuration differences, data and traffic scaling, dependencies on third-party services, and unpredictable usage patterns. These factors can lead to outages, security breaches, performance degradation, data inconsistencies, and other operational issues that may negatively impact customers [See Architecture Patterns and Well-Architected Framework]. These risks can be mitigated through phased rollouts with canary releases, feature flags for controlled feature activation, and comprehensive observability through monitoring, logging, and tracing. Additionally, rigorous scalability testing, including load and chaos testing, and proactive security testing are necessary to identify and address potential vulnerabilities. The use of blue/green deployments and the ability to quickly roll back changes further enhance the resilience of your system. Beyond these strategies, fostering a DevOps culture that emphasizes collaboration between development, operations, and security teams is vital. The following checklist serves as a guide to verify critical areas that may go awry when deploying code to production, helping teams navigate the inherent challenges of distributed systems.

Build Pipelines

- Separate Pipelines: Create distinct CI/CD pipelines for each microservice, including infrastructure changes managed through IaC (Infrastructure as Code). Also, set up a separate pipeline for config changes such as throttling limits or access policies.

- Securing and Managing Dependencies: Identify and address deprecated and vulnerable dependencies during the build process and ensure third party dependencies are vetted and hosted internally.

- Build Failures: Verify build pipelines with a comprehensive suite of unit and integration tests, and promptly resolve any flaky tests caused by concurrency, networking, or other issues.

- Automatic Rollback: Automatically roll back changes if sanity tests or alarm metrics fail during the build process.

- Phased Deployments: Deploy new changes gradually, in phases, across multiple data centers, using canary testing with an adequate baking period to validate functional and non-functional behavior. Immediately roll back and halt further deployments if error rates exceed acceptable thresholds [See Mitigate Production Risks with Phased Deployment].

- Avoid Risky Deployments: Deploy changes during regular office hours so that any issues can be promptly addressed. Avoid deploying code during outages or availability issues, when 20% or more of hosts are unhealthy, or on special calendar days such as holidays or peak traffic periods.
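
The phased-deployment and risky-deployment rules above can be expressed as an automated gate that the pipeline evaluates after each bake period. The following is a minimal sketch, not a definitive implementation: `PhaseMetrics`, `should_halt_rollout`, and the 1% error-rate default are hypothetical names and values chosen for illustration, while the 20% unhealthy-host limit comes from the checklist.

```python
from dataclasses import dataclass


@dataclass
class PhaseMetrics:
    requests: int       # requests served by this phase during the bake period
    errors: int         # failed requests in the same window
    healthy_hosts: int
    total_hosts: int


def should_halt_rollout(m: PhaseMetrics,
                        max_error_rate: float = 0.01,          # assumed threshold
                        max_unhealthy_fraction: float = 0.20   # from the checklist
                        ) -> bool:
    """Return True when the rollout should stop and roll back."""
    if m.total_hosts == 0 or m.requests == 0:
        # No signal is treated as a failure: never promote blind.
        return True
    error_rate = m.errors / m.requests
    unhealthy_fraction = (m.total_hosts - m.healthy_hosts) / m.total_hosts
    return (error_rate > max_error_rate
            or unhealthy_fraction >= max_unhealthy_fraction)
```

A pipeline step could call `should_halt_rollout` after each phase and refuse to promote the build to the next data center when it returns `True`.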

Code Analysis and Verification

API Testing and Analysis

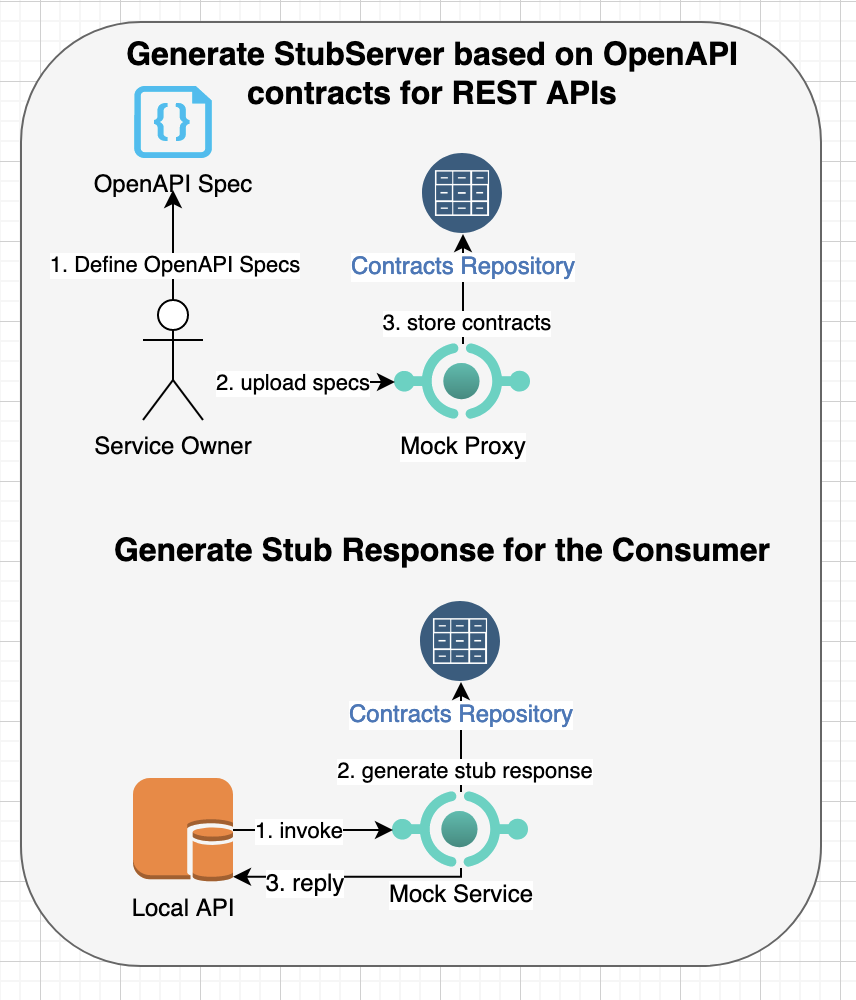

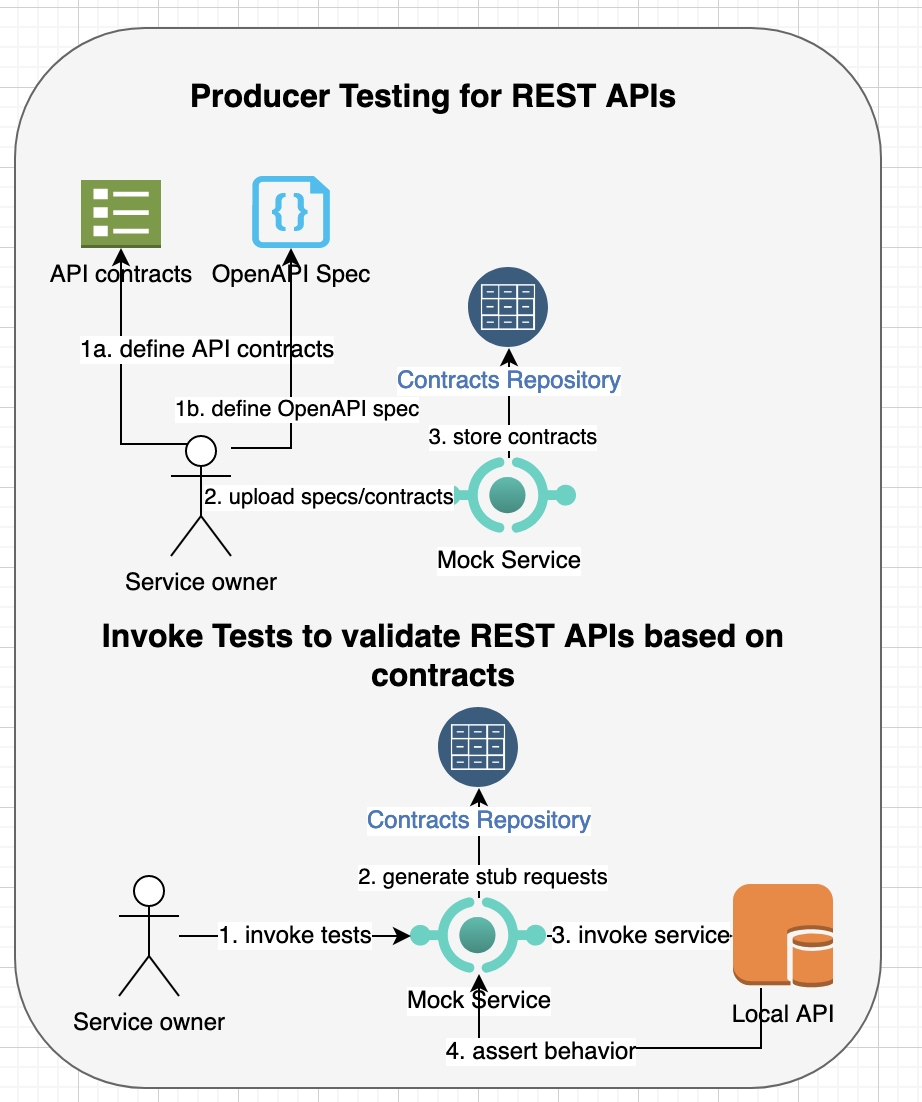

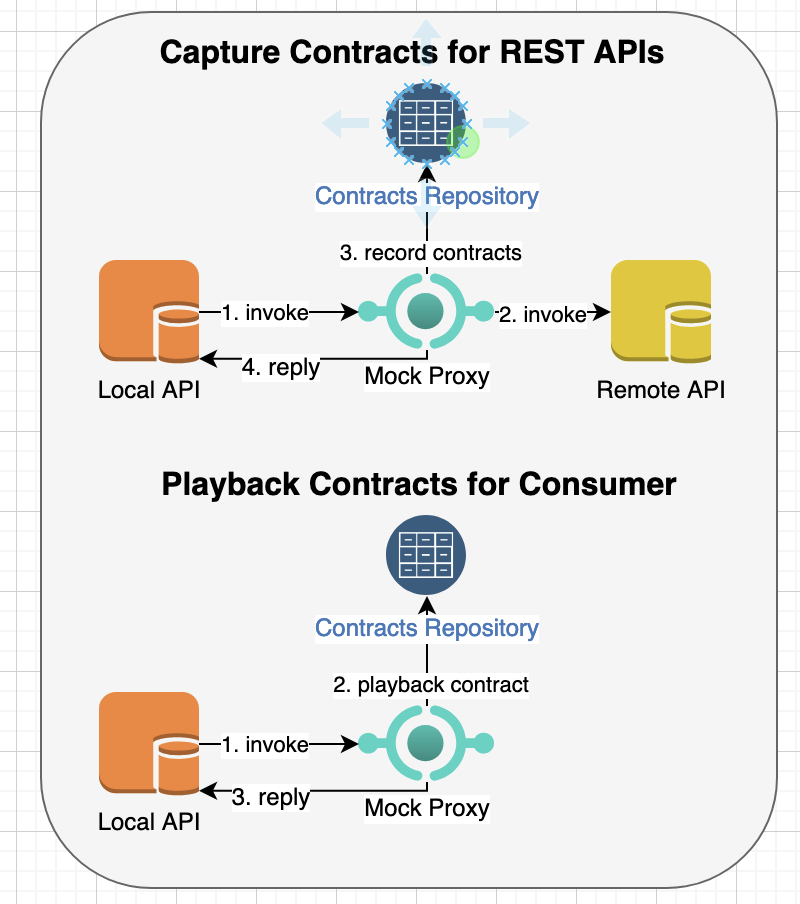

- Contract and Fuzz Testing: Leverage contract testing and fuzz testing to validate API changes [See Contract Testing for REST APIs and Property-based and Generative Testing]

- Test Coverage: Ensure code coverage for unit tests, integration tests, and E2E tests is at least 90%.

- Static Analysis Tools: Use static analysis tools like ESLint, FindBugs, SonarQube, Checkmarx, Coverity and Veracode to detect code smells and identify bugs.

Security Testing

Recommended practices for security testing [See Security Challenges in Microservice Architecture]:

- IAM Best Practices: Follow IAM best practices such as using multi-factor authentication (MFA), regularly rotating credentials and encryption keys, and implementing role-based access control (RBAC).

- Authentication and Authorization: Verify that authentication and authorization policies adhere to the principle of least privilege.

- Defense in Depth: Implement admission controls at every layer including network, application and data.

- Vulnerability & Penetration Testing: Conduct security tests targeting vulnerabilities based on the threat model for the service’s functionality.

- Encryption: Implement encryption at rest and in-transit policies.

- Security Testing Tools: Use tools like OWASP ZAP, Nessus, Acunetix, Qualys, Snyk and Burp Suite for security testing [See OWASP Top Ten, CWE Top 25].

Load Testing

- Test Plan: Ensure the test plan accurately simulates real use cases, including varying data sizes and read/write operations.

- Scalability Assessment: Conduct load tests to assess the scalability of both your primary service and its dependencies.

- Testing Strategies: Conduct load tests using both mock dependent services and real services to identify potential bottlenecks.

- Resource Monitoring: During load testing, monitor for excessive logs, events, and other resources, and assess their impact on latency and potential bottlenecks.

- Autoscaling Validation: Validate on-demand autoscaling policies by testing them under increased load conditions.

Chaos Testing

Chaos testing involves injecting faults into the system to test its resilience and ensure it can recover gracefully [See Fault Injection Testing and Mocking and Fuzz Testing].

- Service Unavailability: Test scenarios where the dependent service is unavailable, experiences high latency, or results in a higher number of faults.

- Monitoring and Alarms: Ensure that monitoring, alarms and on-call procedures for troubleshooting and recovery are functioning as intended.

Canary Testing and Continuous Validation

This strategy involves deploying a new version of a service to a limited subset of users or servers with real-time monitoring and validation before a full deployment.

- Canary Test Validation: Ensure canary tests are based on real use cases and validate both functional and non-functional behavior of the service. If a canary test fails, it should automatically trigger a rollback and halt further deployments until the underlying issues are resolved.

- Continuous Validation: Continuously validate API behavior and monitor performance metrics such as latency, error rates, and resource utilization.

- Edge Case Testing: Canary tests should include common and edge cases such as large request size.

Resilience and Reliability

- Idle Timeout Configuration: Set your API server’s idle connection timeout slightly longer than the load balancer’s idle timeout.

- Load Balancer Configuration: Ensure the load balancer evenly distributes requests among servers using a round-robin method and avoids directing traffic to unhealthy hosts. Prefer this approach over the least-connections method.

- Backward Compatibility: Ensure API changes are backward compatible, verified through contract-based testing, and forward compatible by ignoring unknown properties.

- Correlation ID Injection: Inject a Correlation ID into incoming requests, allowing it to be propagated through all dependent services for logging and tracing purposes.

- Graceful Degradation: Implement graceful degradation to operate in a limited capacity even when dependent services are down.

- Idempotent APIs: Ensure APIs, especially those that create resources, are implemented with idempotent behavior.

- Request Validation: Validate all request parameters and fail fast any requests that are malformed, improperly sized, or contain malicious data.

- Single Points of Failure: Eliminate single points of failure, bottlenecks, and dependencies on shared resources to minimize the blast radius.

- Cold Start Optimization: Ensure that cold service startup time is limited to just a few seconds.
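
One common way to make a resource-creating API idempotent is to have the client send an idempotency key with each create request, so that a retried request returns the original result instead of creating a duplicate. The sketch below assumes this approach; `OrderService` and `create_order` are hypothetical names, and the in-memory dictionary stands in for a durable store with an atomic check-and-set, which a real service would need.

```python
import uuid
from typing import Dict


class OrderService:
    """In-memory stand-in for a resource-creating API (hypothetical)."""

    def __init__(self) -> None:
        # idempotency key -> previously created resource
        self._by_key: Dict[str, dict] = {}

    def create_order(self, idempotency_key: str, payload: dict) -> dict:
        # A repeated key returns the original result; nothing new is created.
        existing = self._by_key.get(idempotency_key)
        if existing is not None:
            return existing
        # The server-generated ID is applied last so a payload cannot override it.
        order = {**payload, "id": str(uuid.uuid4())}
        self._by_key[idempotency_key] = order
        return order
```

With this shape, a client that times out and retries with the same key receives the same order ID, which is the behavior the checklist item asks for.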

Performance Optimization

- Latency Reduction: Identify and optimize parts of the system with high latency, such as database queries, network calls, or computation-heavy tasks.

- Pagination: Implement pagination for list operations, ensuring that pagination tokens are account-specific and invalid after the query expiration time.

- Thread and Queue Management: Configure limits for the number of threads, connections, and queued requests. Generally, the queue size should be proportional to the number of threads and kept small.

- Resource Optimization: Optimize resource usage (e.g., CPU, memory, disk) by tuning configuration settings and optimizing code paths to reduce unnecessary overhead.

- Caching Strategy: Review and optimize caching strategies to reduce load on databases and services, ensuring that cached data is used effectively without becoming stale.

- Database Indexing: Regularly review and update database indexing strategies to ensure queries run efficiently and data retrieval is optimized.
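
The pagination item above asks for tokens that are bound to one account and stop working after an expiration time. A minimal sketch of one way to do this is to sign the cursor, account ID, and expiry with an HMAC. The function names, the claim layout, and the hard-coded `SECRET` are assumptions for illustration; a real service would load the key from a secrets manager.

```python
import base64
import hashlib
import hmac
import json
import time
from typing import Optional

SECRET = b"demo-only-secret"  # assumption: replace with a managed secret


def issue_token(account_id: str, cursor: str, ttl_seconds: int,
                now: Optional[float] = None) -> str:
    now = time.time() if now is None else now
    body = json.dumps({"a": account_id, "c": cursor,
                       "exp": now + ttl_seconds}).encode()
    sig = hmac.new(SECRET, body, hashlib.sha256).digest()  # 32 bytes
    return base64.urlsafe_b64encode(sig + body).decode()


def read_token(token: str, account_id: str,
               now: Optional[float] = None) -> str:
    """Return the cursor, or raise ValueError if the token is not usable."""
    now = time.time() if now is None else now
    raw = base64.urlsafe_b64decode(token.encode())
    sig, body = raw[:32], raw[32:]
    expected = hmac.new(SECRET, body, hashlib.sha256).digest()
    if not hmac.compare_digest(sig, expected):
        raise ValueError("token was tampered with")
    claims = json.loads(body)
    if claims["a"] != account_id:
        raise ValueError("token belongs to a different account")
    if now >= claims["exp"]:
        raise ValueError("token expired")
    return claims["c"]
```

Because the signature covers the account ID and expiry, a token copied to another account or replayed after the query window fails validation before any database work is done.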

Throttling and Rate Limiting

Below are some best practices for throttling and rate limiting [See Effective Load Shedding and Throttling Strategies]:

- Web Application Firewall: Consider implementing Web application firewall integration with your services’ load balancers to enhance security, traffic management and protect against distributed denial-of-service (DDoS). Confirm WAF settings and assess performance through load and security testing.

- Testing Throttling Limits: Test throttling and rate limiting policies in the test environment.

- Granular Limits: Implement tenant-level rate limits at the API endpoint level to prevent the noisy neighbor problem, and ensure that tenant context is passed to downstream services to enforce similar limits.

- Aggregated Limits: When setting rate limits at both the tenant level and the API level, ensure that the tenant-level limit exceeds the combined total of all API limits.

- Graceful degradation: Cache throttling and rate limit data to enable graceful degradation with fail-open if datastore retrieval fails.

- Unauthenticated requests: Minimize processing for unauthenticated requests and safeguard against large payloads and invalid parameters.
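
Tenant-level limits per API endpoint can be sketched with a token bucket keyed by tenant and endpoint. This is an illustrative, single-process example under stated assumptions: `TenantRateLimiter` is a hypothetical name, the token bucket is one of several possible algorithms, and a production limiter would typically keep its state in a shared store and fail open when that store is unavailable, as the checklist suggests.

```python
import time
from typing import Callable, Dict, Tuple


class TenantRateLimiter:
    """Token bucket per (tenant, endpoint) pair."""

    def __init__(self, rate_per_sec: float, burst: int,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.rate = rate_per_sec
        self.burst = burst
        self.clock = clock
        # key -> (tokens remaining, time of last update)
        self._buckets: Dict[Tuple[str, str], Tuple[float, float]] = {}

    def allow(self, tenant_id: str, endpoint: str) -> bool:
        key = (tenant_id, endpoint)
        now = self.clock()
        tokens, last = self._buckets.get(key, (float(self.burst), now))
        # Refill in proportion to elapsed time, capped at the burst size.
        tokens = min(float(self.burst), tokens + (now - last) * self.rate)
        allowed = tokens >= 1
        if allowed:
            tokens -= 1
        self._buckets[key] = (tokens, now)
        return allowed
```

Keying the bucket by tenant means one noisy tenant exhausts only its own tokens, and other tenants on the same endpoint are unaffected.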

Dependent Services

- Timeout and Retry Configuration: Configure connection and request timeouts, implement retries with backoff, and set up circuit breakers and fallback mechanisms for API clients connecting to dependent services.

- Monitoring and Logging: Monitor and log failures and latency of dependent services and infrastructure components such as load balancers, and trigger alarms when they exceed the defined SLOs.

- Scalability of Dependent Service: Verify that dependent services can cope with the increased traffic when your service scales up.
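
The retry, backoff, and circuit-breaker guidance above can be sketched as two small helpers. This is a simplified illustration rather than a production client: `CircuitBreaker`, `CircuitOpenError`, and `retry_with_backoff` are hypothetical names, the thresholds are arbitrary defaults, and the code is not thread-safe.

```python
import random
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class CircuitOpenError(Exception):
    """Raised when the breaker refuses to call a failing dependency."""


class CircuitBreaker:
    def __init__(self, failure_threshold: int = 3, reset_after: float = 30.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.failure_threshold = failure_threshold
        self.reset_after = reset_after
        self.clock = clock
        self.failures = 0
        self.opened_at: Optional[float] = None

    def call(self, fn: Callable[[], T]) -> T:
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after:
                raise CircuitOpenError("dependency is failing; call skipped")
            self.opened_at = None  # half-open: let one trial call through
        try:
            result = fn()
        except Exception:
            self.failures += 1
            if self.failures >= self.failure_threshold:
                self.opened_at = self.clock()
            raise
        self.failures = 0  # success closes the circuit
        return result


def retry_with_backoff(fn: Callable[[], T], attempts: int = 3,
                       base_delay: float = 0.1, max_delay: float = 2.0,
                       sleep: Callable[[float], None] = time.sleep) -> T:
    for attempt in range(attempts):
        try:
            return fn()
        except CircuitOpenError:
            raise  # retrying an open circuit only adds load
        except Exception:
            if attempt == attempts - 1:
                raise
            # Full jitter: random wait up to the capped exponential delay.
            sleep(random.uniform(0, min(max_delay, base_delay * 2 ** attempt)))
    raise ValueError("attempts must be at least 1")
```

A caller might combine them as `retry_with_backoff(lambda: breaker.call(fetch))`, where `fetch` is the (hypothetical) call to the dependent service, and fall back to a cached or default response when `CircuitOpenError` is raised.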

Compliance and Privacy

Below are some best practices for ensuring compliance:

- Compliance: Ensure all data handling complies with applicable regulations such as the California Consumer Privacy Act (CCPA), the General Data Protection Regulation (GDPR), the Health Insurance Portability and Accountability Act (HIPAA), and other privacy regulations [See NIST SP 800-122].

- Privacy: Identify and classify Personally Identifiable Information (PII), and ensure all data access is protected through Identity and Access Management (IAM) and compliance-based PII policies [See DHS Guidance].

- Privacy by design: Incorporate privacy by design principles into every stage of development to reduce the risk of data breaches.

- Audit Logs: Maintain logs for all administrative actions, access to sensitive data and changes to critical configurations for compliance audit trails.

- Monitoring: Continuously monitor compliance requirements to ensure ongoing adherence to regulations.

Data Management

- Data Consistency: Evaluate the data consistency requirements, such as strong versus eventual consistency. Ensure data is consistently stored across multiple data stores, and implement a reconciliation process to detect any inconsistencies or lag times, logging them for monitoring and alerting purposes.

- Schema Compatibility: Ensure data schema changes are both backward and forward compatible by implementing a two-phase release process. First, deploy an intermediate version that can read the new schema format but continues to write in the old format. Once this intermediate version is fully deployed and stable, proceed to roll out the new code that writes data in the new format.

- Retention Policies: Establish and verify data retention policies across all datasets.

- Unique Data IDs: Ensure data IDs are unique and do not overflow, especially when using 32-bit or smaller integers.

- Auto-scaling Testing: Test auto-scaling policies triggered by traffic spikes, and confirm proper partitioning/sharding across scaled resources.

- Data Cleanup: Clean up stale data, logs and other resources that have expired or are no longer needed.

- Divergence Monitoring: Implement automated processes to identify divergence from data consistency or high lag time with data synchronization when working with multiple data stores.

- Data Migration Testing: Test data migrations in isolated environments to ensure they can be performed without data loss or corruption.

- Backup and Recovery: Test backup and recovery processes to confirm they meet defined Recovery Point Objective (RPO) and Recovery Time Objective (RTO) targets.

- Data Masking: Implement data masking in non-production environments to protect sensitive information.
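
The two-phase release described under Schema Compatibility can be illustrated with a reader that accepts both formats and a writer whose output format is switched only in the second phase. This is a sketch under assumptions: the `name` to `first_name`/`last_name` split is an invented example schema change, and `read_user`, `write_user`, and `write_new_format` are hypothetical names.

```python
def read_user(record: dict) -> dict:
    """Accept both formats; unknown fields are ignored rather than rejected."""
    if "first_name" in record:
        # New format.
        return {"first_name": record["first_name"],
                "last_name": record.get("last_name", "")}
    # Old format: a single "name" field.
    first, _, last = record.get("name", "").partition(" ")
    return {"first_name": first, "last_name": last}


def write_user(user: dict, write_new_format: bool) -> dict:
    if write_new_format:
        # Phase 2: enabled only after every reader understands the new format.
        return {"first_name": user["first_name"],
                "last_name": user["last_name"]}
    # Phase 1: the intermediate version still writes the old format.
    return {"name": f"{user['first_name']} {user['last_name']}".strip()}
```

During phase 1 every deployed version can read what any other version writes, so a rollback from phase 2 to phase 1 does not strand unreadable records.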

Caching

Here are some best practices for caching strategies [See When Caching is not a Silver Bullet]:

- Stale Cache Handling: Handle stale cache data by setting appropriate time-to-live (TTL) values and ensuring cache invalidation is correctly implemented.

- Cache Preloading: Pre-load cache before significant traffic spikes so that latency can be minimized.

- Cache Validation: Validate the effectiveness of your cache invalidation and clearing methods.

- Negative Cache: Implement caching behavior for both positive and negative use cases and monitor the cache hits and misses.

- Peak Traffic Testing: Assess service performance under peak traffic conditions without caching.

- Bimodal Behavior: Minimize reliance on caching to reduce the complexity of bimodal logic paths.
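
Stale-data handling and negative caching from the list above can be sketched with a small read-through cache that stores "not found" results under a shorter TTL and counts hits and misses. `TTLCache`, its parameters, and the convention that the loader returns `None` for a missing key are assumptions made for this example.

```python
import time
from typing import Any, Callable, Dict, Hashable, Optional, Tuple

_MISSING = object()  # sentinel cached for "looked up, not found"


class TTLCache:
    def __init__(self, loader: Callable[[Hashable], Optional[Any]],
                 ttl: float = 60.0, negative_ttl: float = 5.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.loader = loader
        self.ttl = ttl
        self.negative_ttl = negative_ttl
        self.clock = clock
        self.hits = 0
        self.misses = 0
        # key -> (value or _MISSING, expiry time)
        self._entries: Dict[Hashable, Tuple[Any, float]] = {}

    def get(self, key: Hashable) -> Optional[Any]:
        now = self.clock()
        entry = self._entries.get(key)
        if entry is not None and now < entry[1]:
            self.hits += 1
            value = entry[0]
        else:
            self.misses += 1
            loaded = self.loader(key)  # assumed to return None when absent
            value = _MISSING if loaded is None else loaded
            ttl = self.negative_ttl if loaded is None else self.ttl
            self._entries[key] = (value, now + ttl)
        return None if value is _MISSING else value
```

The shorter negative TTL limits how long a newly created record can appear to be missing, while still shielding the datastore from repeated lookups of keys that do not exist.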

Disaster Recovery

- Backup Validation: Regularly test backup and recovery processes to ensure they meet defined Recovery Point Objective (RPO) and Recovery Time Objective (RTO) targets.

- Failover Testing: Test failover procedures for critical services to validate that they can seamlessly switch over to backup systems or regions without service disruption.

- Chaos Engineering: Incorporate chaos engineering practices to simulate disaster scenarios and validate the resilience of your systems under failure conditions.

Configuration and Feature-Flags

- Configuration Storage: Prefer storing configuration changes in a source code repository and releasing them gradually through a deployment pipeline including tests for verification.

- Configuration Validation: Validate configuration changes in a test environment before applying them in production to avoid misconfigurations that could cause outages.

- Feature Management: Use a centralized feature flag management system to maintain consistency across environments and easily roll back features if necessary.

- Testing Feature Flags: Test every combination of feature flags comprehensively in both test and pre-production environments before the release.
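
Testing every combination of boolean feature flags means running the suite against 2^n configurations for n flags. A minimal sketch of generating those configurations is shown below; `flag_combinations` and the flag names in the usage note are hypothetical.

```python
from itertools import product
from typing import Dict, Iterator, Sequence


def flag_combinations(flags: Sequence[str]) -> Iterator[Dict[str, bool]]:
    """Yield every on/off assignment for the given boolean flags."""
    for values in product([False, True], repeat=len(flags)):
        yield dict(zip(flags, values))
```

For example, `list(flag_combinations(["new_checkout", "dark_mode"]))` yields four configurations. Since the count doubles with each added flag, exhaustive testing stays practical only while the number of active flags is small, which is one reason to retire flags once a feature is fully released.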

Observability

Observability involves instrumenting systems to collect and analyze logs, metrics, and traces in order to monitor system performance and health. Below are some best practices for monitoring, logging, tracing and alarms [See USE and RED methodologies for Systems Performance]:

Monitoring

- System Metrics: Monitor key system metrics such as CPU usage, memory usage, disk I/O, network latency, and throughput across all nodes in your distributed system.

- Application Metrics: Track application-specific metrics like request latency, error rates, throughput, and the performance of critical application functions.

- Server Faults and Client Errors: Monitor metrics for server-side faults (5XX) and client-side errors (4XX) including those from dependent services.

- Service Level Objectives (SLOs): Define and monitor SLOs for latency, availability, and error rates. Use these to trigger alerts if the system’s performance deviates from expected levels.

- Health Checks: Implement regular health checks to assess the status of services and underlying infrastructure, including database connections and external dependencies.

- Dashboards: Use dashboards to display real-time and historical graphs for throughput, P9X latency, faults/errors, data size, and other service metrics, with the ability to filter by tenant ID.
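
Dashboards usually plot tail latency (P9X) rather than the average because a few slow requests can hide behind a healthy-looking mean. The sketch below computes a percentile with the nearest-rank method, which is one of several common definitions; the `percentile` helper is written for illustration and is not taken from a specific monitoring library.

```python
import math
from typing import Sequence


def percentile(samples: Sequence[float], p: float) -> float:
    """Nearest-rank percentile: smallest sample with at least p% at or below it."""
    if not samples:
        raise ValueError("no samples")
    if not 0 < p <= 100:
        raise ValueError("p must be in (0, 100]")
    ordered = sorted(samples)
    rank = math.ceil(p * len(ordered) / 100)  # 1-based rank
    return ordered[max(rank, 1) - 1]
```

With 98 requests at 10 ms and two at 1000 ms and 2000 ms, the mean is about 40 ms while `percentile(samples, 99)` returns 1000, which is the signal an alarm on tail latency would act on.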

Logging

- Structured Logging: Ensure logs are structured and include essential information such as timestamps, correlation IDs, user IDs, and relevant request/response data.

- Log API entry and exits: Log the start and completion of API invocations along with correlation IDs for tracing purposes.

- Log Retention: Define and enforce log retention policies to avoid storage overuse and ensure compliance with data regulations.

- Log Aggregation: Use log aggregation tools to centralize logs from different services and nodes, making it easier to search and analyze them in real-time.

- Log Levels: Properly categorize logs (e.g., DEBUG, INFO, WARN, ERROR) and ensure sensitive information (such as PII) is not logged.
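
Structured logging with a correlation ID on every line can be sketched with the standard `logging` and `contextvars` modules. The JSON field names, the `X-Correlation-ID` header name, and the `handle_request` function are assumptions for illustration; the checklist does not prescribe a specific header or log schema.

```python
import contextvars
import json
import logging
import uuid

# Holds the correlation ID for the request currently being handled.
correlation_id = contextvars.ContextVar("correlation_id", default="-")


class JsonFormatter(logging.Formatter):
    """Render each log record as one JSON object per line."""

    def format(self, record: logging.LogRecord) -> str:
        return json.dumps({
            "ts": self.formatTime(record),
            "level": record.levelname,
            "logger": record.name,
            "correlation_id": correlation_id.get(),
            "message": record.getMessage(),
        })


def handle_request(headers: dict, log: logging.Logger) -> str:
    # Reuse the caller's ID when present so the whole call chain stays linked.
    cid = headers.get("X-Correlation-ID") or str(uuid.uuid4())
    token = correlation_id.set(cid)
    try:
        log.info("request started")
        # ... business logic would run here ...
        log.info("request completed")
        return cid
    finally:
        correlation_id.reset(token)
```

Attaching `JsonFormatter` to a handler makes every line machine-parseable, and because the ID lives in a context variable, code deeper in the call stack logs it without having to pass it around explicitly.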

Tracing

- Distributed Tracing: Implement distributed tracing to capture end-to-end latency and the flow of requests across multiple services. This helps in identifying bottlenecks and understanding dependencies between services.

- Trace Sampling: Use trace sampling to manage the volume of tracing data, capturing detailed traces for a subset of requests to balance observability and performance.

- Trace Context Propagation: Ensure that trace context (e.g., trace IDs, span IDs) is propagated across all services, allowing complete trace reconstruction.
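
Trace sampling and context propagation work together: if the sampling decision is derived from the trace ID, every service reaches the same decision for the same request, and the decision can be forwarded in a header. The sketch below assumes randomly generated hexadecimal trace IDs and uses the W3C Trace Context `traceparent` layout (version, trace ID, parent span ID, flags); `should_sample` and `child_headers` are hypothetical helper names.

```python
from typing import Dict


def should_sample(trace_id: str, sample_rate: float) -> bool:
    """Deterministic decision: the same trace ID always gives the same answer."""
    # Map the last 8 hex digits onto [0, 1) and compare with the rate.
    bucket = int(trace_id[-8:], 16) / 0x1_0000_0000
    return bucket < sample_rate


def child_headers(trace_id: str, span_id: str, sampled: bool) -> Dict[str, str]:
    """Build the header passed to a downstream service."""
    flags = "01" if sampled else "00"  # lowest bit marks the trace as sampled
    return {"traceparent": f"00-{trace_id}-{span_id}-{flags}"}
```

A service would call `should_sample` once at the edge, then pass the result to `child_headers` on every outgoing call so downstream services record spans for exactly the same subset of requests.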

Alarms

- Threshold-Based Alarms: Set up alarms based on predefined thresholds for key metrics such as CPU/memory/disk/network usage, latency, error rates, throughput, starvation of threads and database connections, etc. Ensure that alarms are actionable and not too sensitive to avoid alert fatigue.

- Anomaly Detection: Implement anomaly detection to identify unusual patterns in metrics or logs that might indicate potential issues before they lead to outages.

- Metrics Isolation: Keep metrics and alarms from continuous canary tests and dependent services separate from those generated by real traffic.

- On-Call Rotation: Ensure that alarms trigger appropriate notifications to on-call personnel, and maintain a rotation schedule to distribute the on-call load among team members.

- Runbook Integration: Include runbooks with alarms to provide on-call engineers with guidance on how to investigate and resolve issues.
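
One way to keep threshold alarms actionable without making them too sensitive is to require several breaching datapoints within a recent window before firing, so a single spike does not page anyone. The sketch below illustrates that idea; `ThresholdAlarm` and its parameter names are hypothetical, and the defaults are arbitrary.

```python
from collections import deque
from typing import Deque


class ThresholdAlarm:
    """Fires when at least `datapoints_to_alarm` of the last `window` values breach."""

    def __init__(self, threshold: float, datapoints_to_alarm: int = 3,
                 window: int = 5) -> None:
        self.threshold = threshold
        self.datapoints_to_alarm = datapoints_to_alarm
        # Only the most recent `window` breach flags are kept.
        self._recent: Deque[bool] = deque(maxlen=window)

    def observe(self, value: float) -> bool:
        """Record one datapoint and return True if the alarm is firing."""
        self._recent.append(value > self.threshold)
        return sum(self._recent) >= self.datapoints_to_alarm
```

With a 500 ms latency threshold and the defaults above, an isolated 900 ms datapoint leaves the alarm quiet, while three breaches within five datapoints cause it to fire.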

Rollback and Roll Forward

Rolling back involves redeploying a previous version to undo unwanted changes. Rolling forward involves pushing a new commit with the fix and deploying it. Here are some best practices for rollback and roll forward:

- Immutable infrastructure: Implement immutable infrastructure practices so that switching back to a previous instance is simple.

- Automated Rollbacks: Ensure rollbacks are automated so that they can be executed quickly and reliably without human intervention.

- Rollback Testing: Test rollback changes in a test environment to ensure the code and data can be safely reverted.

- Critical bugs: To prevent customer impact, avoid rolling back if the changes involve critical bug fixes or compliance and security-related updates.

- Schema changes: If the new code introduced schema changes, confirm that the previous version can still read and update the modified data.

- Roll Forward: Use rolling forward when rollback isn’t possible.

- Avoid rushing Roll Forwards: Avoid rolling forward if other changes have been committed that are still being tested.

- Testing Roll Forwards: Make sure the new changes, including configuration updates, are thoroughly tested before rolling forward.

Documentation and Knowledge Sharing

- Operational Runbooks: Maintain comprehensive runbooks that document operational procedures, troubleshooting steps, and escalation paths for common issues.

- Postmortems: Conduct postmortems after incidents to identify root causes, share lessons learned, and implement corrective actions to prevent recurrence.

- Knowledge Base: Build and maintain a knowledge base with documentation on system architecture, deployment processes, testing strategies, and best practices for new team members and ongoing reference.

- Training and Drills: Regularly train the team on disaster recovery procedures, runbooks, and incident management. Conduct disaster recovery drills to ensure readiness for actual incidents.

Continuous Improvement

- Feedback Loops: Establish feedback loops between development, operations, and security teams to continuously improve deployment processes and system reliability.

- Metrics Review: Regularly review metrics, logs, and alarms to identify trends, optimize configurations, and enhance system performance.

- Automation: Automate repetitive tasks, such as deployments, monitoring setup, and incident response, to reduce human error and increase efficiency.

Conclusion

Releasing software in distributed systems presents unique challenges due to the complexity and scale of production environments, which cannot be fully replicated in testing. By adhering to the practices outlined in this checklist, such as canary releases, feature flags, comprehensive observability, rigorous scalability testing, and well-prepared rollback mechanisms, you can significantly reduce the risks associated with deploying new code. A strong DevOps culture, where development, operations, and security teams work closely together, ensures continuous improvement and adaptability to new challenges. By following this checklist and fostering a culture of collaboration, you can enhance the stability, security, and scalability of each release for your platform.