Background

I have long been interested in the Adaptive Object Model (AOM) pattern and used it in a couple of projects in the early 2000s. I have also written about it before. The pattern emerged from the work of Ralph Johnson and his colleagues in the late 1990s and addresses a fundamental challenge in software architecture: how to build systems that can evolve structurally without code changes or downtime. Its roots can be traced back to several foundational ideas in computer science and software engineering:

- Reflection and Metaprogramming: Early Lisp systems showed the power of treating code as data, enabling programs to modify themselves at runtime. This concept heavily influenced the AOM pattern’s approach to treating metadata as first-class objects.

- Type Theory: The work of pioneers like Alonzo Church and Haskell Curry on type systems provided the theoretical foundation for the “type square” pattern that forms AOM’s core structure, where types themselves become objects that can be manipulated.

- Database Systems: The entity-attribute-value (EAV) model used in database design influenced AOM’s approach to storing flexible data structures.

Related Patterns

The following patterns are related to AOM:

- Facade Pattern: AOM often employs facades to provide simplified interfaces over complex meta-object structures, hiding the underlying complexity from client code.

- Strategy Pattern: The dynamic binding of operations in AOM naturally implements the Strategy pattern, allowing algorithms to be selected and modified at runtime.

- Composition over Inheritance: AOM uses the principle of favoring composition over inheritance by building complex objects from simpler, configurable components rather than rigid class hierarchies.

- Domain-Specific Languages (DSLs): Many AOM implementations provide DSLs for defining entity types and relationships, making the system accessible to domain experts rather than just programmers.

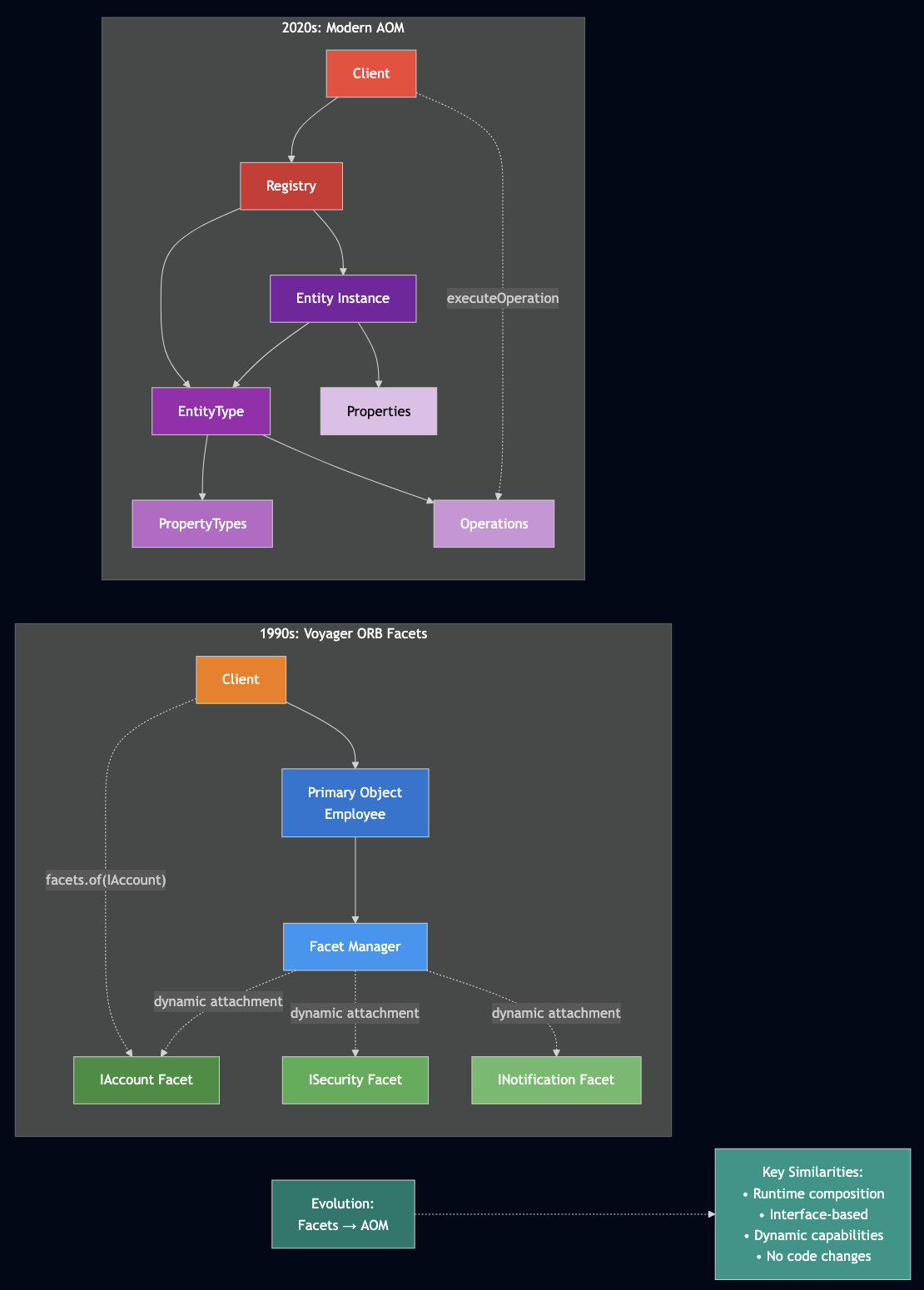

Voyager ORB’s Dynamic Aggregation

In the late 1990s and early 2000s, I used Voyager ORB in some personal projects. Voyager pioneered a concept called “Dynamic Aggregation”: the ability to attach secondary objects, called facets, to primary objects at runtime. This system demonstrated several key principles that later influenced AOM development:

- Runtime Object Extension: Objects could be extended with new capabilities without modifying their original class definitions:

// Voyager ORB example - attaching an account facet to an employee

IEmployee employee = new Employee("joe", "234-44-2678");

IFacets facets = Facets.of(employee);

IAccount account = (IAccount) facets.of(IAccount.class);

account.deposit(2000);

- Interface-based Composition: Facets were accessed through interfaces, providing a clean separation between capability and implementation – a principle central to modern AOM.

- Distributed Object Mobility: Voyager’s facet system worked seamlessly across network boundaries, allowing objects and their attached capabilities to move between different machines while maintaining their extended functionality.

- Automatic Proxy Generation: Like modern AOM systems, Voyager automatically generated the necessary plumbing code at runtime, using Java’s reflection and bytecode manipulation capabilities.

The Voyager approach influenced distributed computing patterns and demonstrated that dynamic composition could work reliably in production systems. The idea of attaching behavior at runtime through well-defined interfaces is directly applicable to modern AOM implementations. The key insight from Voyager was that objects don’t need to know about all their potential capabilities at compile time. Instead, capabilities can be discovered, attached, and composed dynamically based on runtime requirements – a principle that AOM extends to entire domain models.
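The facet idea can be sketched in a few lines of TypeScript. This is only an illustration of the principle, not Voyager's API: `FacetHost`, `attachFacet`, `facetOf`, `AccountFacet`, and `makeAccount` are hypothetical names invented for this example.

```typescript
// Hypothetical sketch of dynamic aggregation: facets are attached to a
// primary object at runtime and looked up by a capability key.
// None of these names come from Voyager; they are illustrative only.

interface AccountFacet {
  deposit(amount: number): void;
  balance(): number;
}

class FacetHost<P> {
  private facets = new Map<string, unknown>();

  constructor(public readonly primary: P) {}

  // Attach a capability under a key; the primary's class is not modified.
  attachFacet<F>(key: string, facet: F): void {
    this.facets.set(key, facet);
  }

  // Discover a capability at runtime; undefined if it was never attached.
  facetOf<F>(key: string): F | undefined {
    return this.facets.get(key) as F | undefined;
  }
}

class Employee {
  constructor(public readonly fullName: string) {}
}

// A closure-based facet implementation that keeps its own state.
function makeAccount(): AccountFacet {
  let total = 0;
  return {
    deposit(amount: number) { total += amount; },
    balance() { return total; },
  };
}

const employee = new FacetHost(new Employee("joe"));
employee.attachFacet<AccountFacet>("account", makeAccount());

const account = employee.facetOf<AccountFacet>("account");
account?.deposit(2000);
console.log(account?.balance()); // 2000
```

The `Employee` class never mentions accounts; the capability exists only because it was attached at runtime, which is the property AOM generalizes to whole domain models.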

Introduction to Adaptive Object Model

Adaptive Object Model is an architectural pattern used in domains requiring dynamic manipulation of metadata and business rules. Unlike traditional object-oriented design where class structures are fixed at compile time, AOM treats class definitions, attributes, relationships, and even business rules as data that can be modified at runtime.

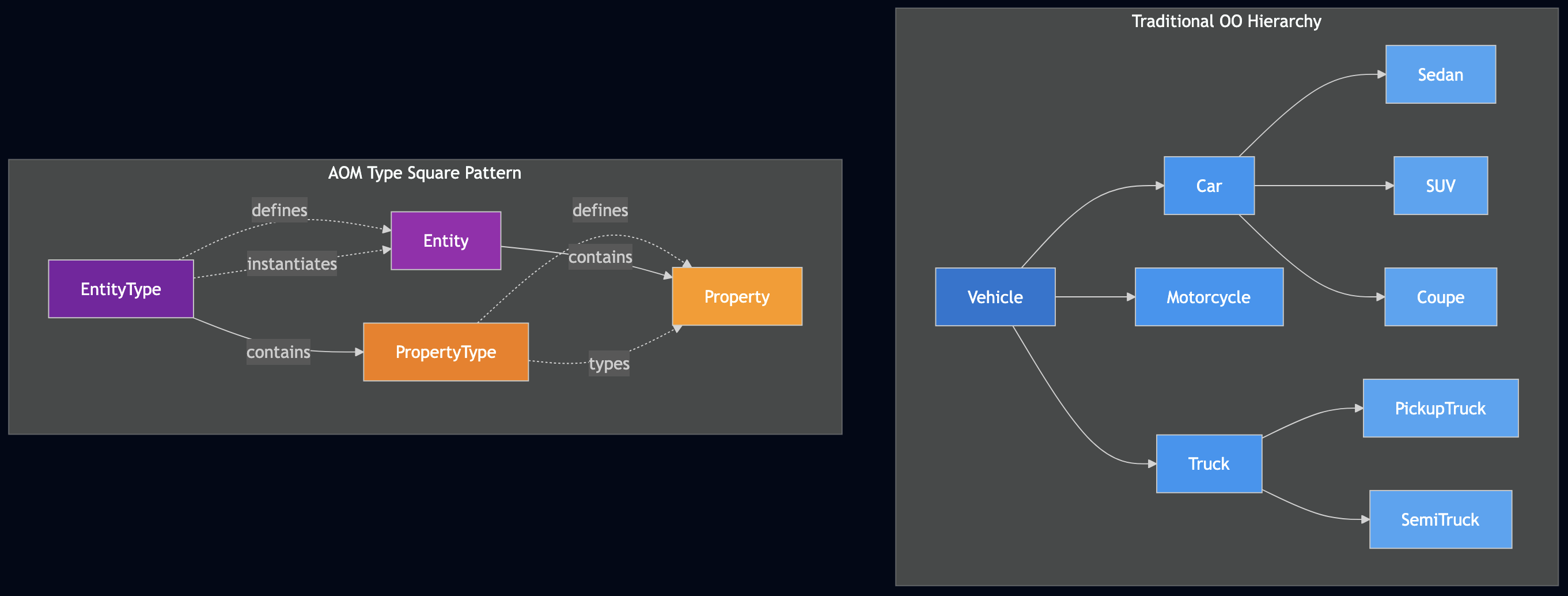

Consider our vehicle example again. In traditional OO design, you might have:

    Vehicle
    ├── Car
    │   ├── Sedan
    │   ├── SUV
    │   └── Coupe
    ├── Motorcycle
    └── Truck
        ├── PickupTruck
        └── SemiTruck

With AOM, instead of predefined inheritance hierarchies, we use the “type square” pattern:

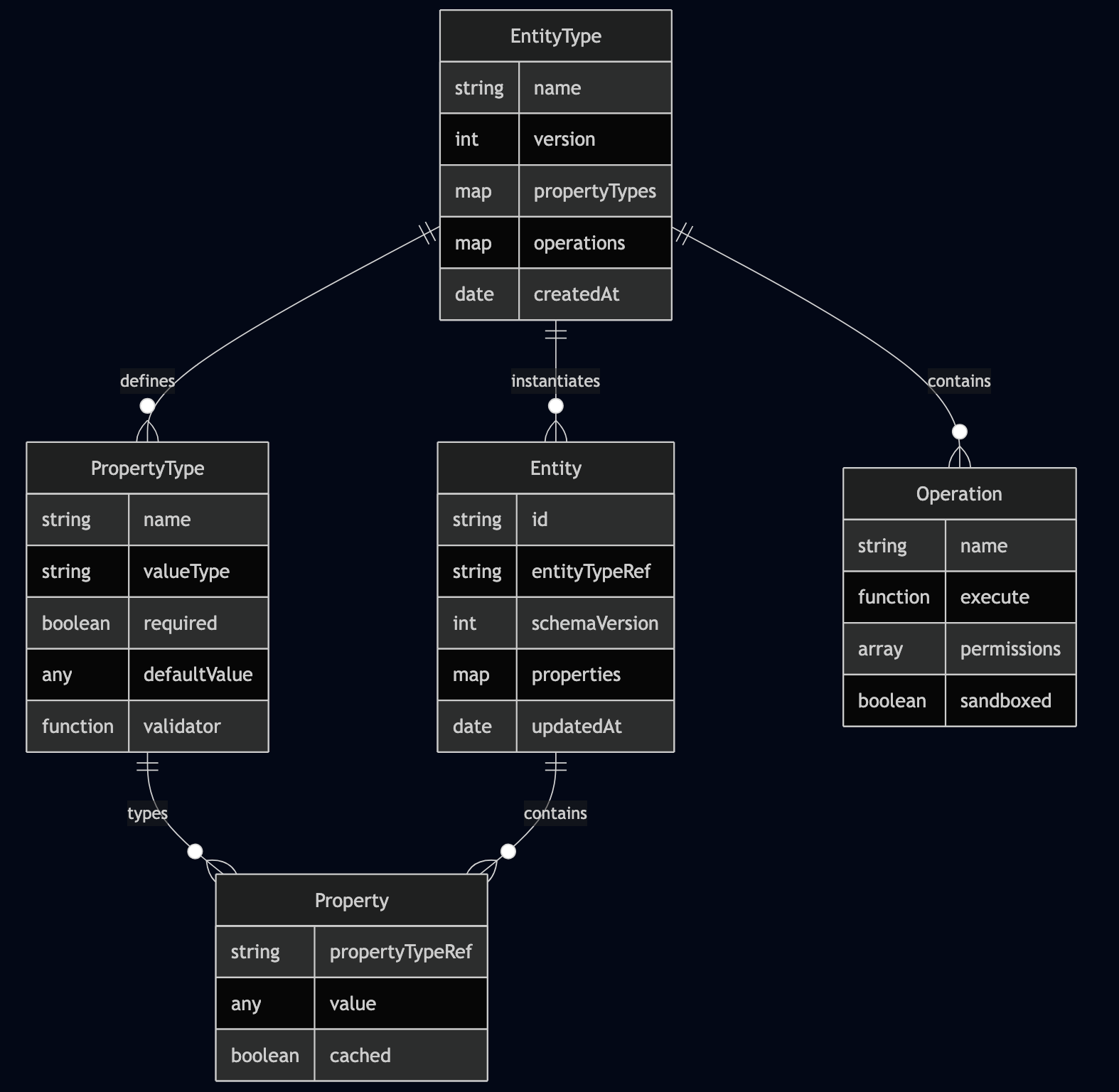

- EntityType: Represents what would traditionally be a class

- Entity: Represents what would traditionally be an object instance

- PropertyType: Defines the schema for attributes

- Property: Holds actual attribute values

This meta-model lets new entity types and properties be added at runtime without code changes, making it well suited to domains with rapidly evolving requirements or where different customers need different data models.
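Before the full implementations, the four roles can be shown in a compact TypeScript sketch. The names (`PropTypeDef`, `EntityTypeDef`, `PropValue`, `EntityInstance`, `defineType`, `setValue`) are assumptions made for this illustration and are deliberately smaller than the later listings.

```typescript
// Minimal type-square sketch: each runtime object points at a type object
// that describes it. All names here are illustrative assumptions.

type Scalar = string | number | boolean;

interface PropTypeDef {
  name: string;
  valueType: "string" | "number" | "boolean";
}

interface EntityTypeDef {
  name: string;
  propertyTypes: Map<string, PropTypeDef>;
}

interface PropValue {
  type: PropTypeDef; // Property -> PropertyType
  value: Scalar;
}

interface EntityInstance {
  type: EntityTypeDef; // Entity -> EntityType
  properties: Map<string, PropValue>;
}

// Defining a new "class" is just building data at runtime.
function defineType(typeName: string, props: PropTypeDef[]): EntityTypeDef {
  const propertyTypes = new Map<string, PropTypeDef>();
  for (const p of props) propertyTypes.set(p.name, p);
  return { name: typeName, propertyTypes };
}

// A value is checked against its PropertyType, not against a compiled class.
function setValue(entity: EntityInstance, propName: string, value: Scalar): void {
  const propType = entity.type.propertyTypes.get(propName);
  if (!propType) throw new Error(`Unknown property: ${propName}`);
  if (typeof value !== propType.valueType) {
    throw new Error(`Expected ${propType.valueType} for ${propName}`);
  }
  entity.properties.set(propName, { type: propType, value });
}

const sedanType = defineType("Sedan", [
  { name: "maker", valueType: "string" },
  { name: "doors", valueType: "number" },
]);

const sedan: EntityInstance = { type: sedanType, properties: new Map() };
setValue(sedan, "maker", "Toyota");
setValue(sedan, "doors", 4);
```

Adding a `Coupe` type here means another `defineType` call with different data, rather than a new subclass in the hierarchy.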

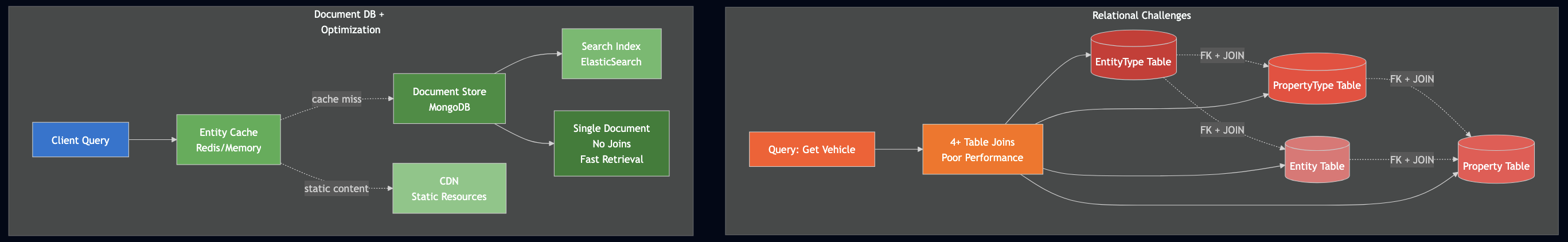

The Database Challenge: From Relational to Document

Traditional relational databases present significant challenges for AOM implementations:

- Excessive Joins: In a relational AOM implementation, reconstructing a single business object requires joining multiple tables:

  - Entity table (object instances)

  - Property table (attribute values)

  - PropertyType table (attribute metadata)

  - EntityType table (type definitions)

- Schema Rigidity: Relational schemas require predefined table structures, which conflicts with AOM’s goal of runtime flexibility.

- Performance Issues: The EAV (Entity-Attribute-Value) pattern commonly used in relational AOM implementations tends to suffer from poor query performance, because a single generic “value” column holding mixed data types is hard to index and compare efficiently.

- Complex Queries: Simple business queries become complex multi-table joins with numerous conditions, making the system difficult to optimize and maintain.
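To make the join cost concrete, the following TypeScript sketch rebuilds one object from four in-memory arrays that stand in for the relational tables. The row shapes and column names are assumptions for illustration, not a prescribed schema, and no real database is involved.

```typescript
// Illustrative only: in-memory stand-ins for the four relational tables.
// Table and column names are assumptions, not a prescribed schema.

interface EntityTypeRow { id: number; name: string; }
interface PropertyTypeRow { id: number; entityTypeId: number; name: string; }
interface EntityRow { id: number; entityTypeId: number; }
interface PropertyRow { entityId: number; propertyTypeId: number; value: string; }

const entityTypeRows: EntityTypeRow[] = [{ id: 1, name: "Vehicle" }];
const propertyTypeRows: PropertyTypeRow[] = [
  { id: 10, entityTypeId: 1, name: "maker" },
  { id: 11, entityTypeId: 1, name: "miles" },
];
const entityRows: EntityRow[] = [{ id: 100, entityTypeId: 1 }];
const propertyRows: PropertyRow[] = [
  { entityId: 100, propertyTypeId: 10, value: "Tesla" },
  { entityId: 100, propertyTypeId: 11, value: "15000" }, // every value is stored as text
];

// Rebuilding one object touches all four "tables", which is the join cost
// a relational AOM pays on every read.
function loadEntity(entityId: number): Record<string, string> | undefined {
  const entity = entityRows.find(e => e.id === entityId);
  if (!entity) return undefined;
  const type = entityTypeRows.find(t => t.id === entity.entityTypeId);
  if (!type) return undefined;

  const result: Record<string, string> = { entityType: type.name };
  for (const row of propertyRows.filter(p => p.entityId === entityId)) {
    const propType = propertyTypeRows.find(pt => pt.id === row.propertyTypeId);
    if (propType) result[propType.name] = row.value;
  }
  return result;
}

console.log(loadEntity(100));
```

Note that `miles` comes back as the string `"15000"`: with a single text value column, numeric comparisons need a cast on every query.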

The Document Database Solution

Document databases like MongoDB naturally align with AOM principles:

- Schema Flexibility: Documents can contain arbitrary fields without predefined schemas, allowing entity types to evolve dynamically.

- Nested Structures: Complex relationships and metadata can be stored within documents, reducing the need for joins.

- Rich Querying: Modern document databases provide sophisticated query capabilities while maintaining flexibility.

- Indexing: Flexible indexing strategies can be applied to document fields as needed.
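As a rough sketch of what these points mean for an AOM entity, the TypeScript below models each entity as a single document with its properties embedded. The `EntityDocument` shape and the `findByMinMiles` helper are assumptions for this example; it filters a plain array and does not use the MongoDB driver.

```typescript
// Illustrative document shape; field names are assumptions chosen to
// match the type-square vocabulary, not a required MongoDB layout.

interface EntityDocument {
  entityType: string;
  properties: Record<string, string | number | boolean>;
}

const vehicleDocs: EntityDocument[] = [
  { entityType: "Vehicle", properties: { maker: "Tesla", miles: 15000, electric: true } },
  { entityType: "Vehicle", properties: { maker: "Toyota", miles: 42000 } },
  // A later document can carry a field the earlier ones never had.
  { entityType: "Vehicle", properties: { maker: "Rivian", miles: 900, towingCapacityLbs: 11000 } },
];

// One read returns the whole object, and values keep their native types,
// so a numeric comparison needs no casting.
function findByMinMiles(docs: EntityDocument[], minMiles: number): EntityDocument[] {
  return docs.filter(d => {
    const miles = d.properties.miles;
    return typeof miles === "number" && miles >= minMiles;
  });
}

const highMileage = findByMinMiles(vehicleDocs, 10000);
console.log(highMileage.map(d => d.properties.maker)); // Tesla and Toyota
```

Each document is self-contained, so reading an entity is a single lookup instead of a four-table join.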

Rust Implementation

Let’s implement AOM in Rust, taking advantage of its type safety while maintaining flexibility through traits and enums. Rust’s ownership model and pattern matching make it particularly well-suited for safe metaprogramming.

use std::collections::HashMap;

use serde::{Serialize, Deserialize};

use std::sync::Arc;

// Type-safe property values using enums

#[derive(Debug, Clone, Serialize, Deserialize)]

pub enum PropertyValue {

String(String),

Integer(i64),

Float(f64),

Boolean(bool),

Date(chrono::DateTime<chrono::Utc>),

}

impl PropertyValue {

pub fn type_name(&self) -> &'static str {

match self {

PropertyValue::String(_) => "String",

PropertyValue::Integer(_) => "Integer",

PropertyValue::Float(_) => "Float",

PropertyValue::Boolean(_) => "Boolean",

PropertyValue::Date(_) => "Date",

}

}

}

// Property type definition

#[derive(Debug, Clone, Serialize, Deserialize)]

pub struct PropertyType {

pub name: String,

pub value_type: String,

pub required: bool,

pub default_value: Option<PropertyValue>,

}

impl PropertyType {

pub fn new(name: &str, value_type: &str, required: bool) -> Self {

Self {

name: name.to_string(),

value_type: value_type.to_string(),

required,

default_value: None,

}

}

}

// Property instance

#[derive(Debug, Clone, Serialize, Deserialize)]

pub struct Property {

pub property_type: String, // Reference to PropertyType name

pub value: PropertyValue,

}

impl Property {

pub fn new(property_type: &str, value: PropertyValue) -> Self {

Self {

property_type: property_type.to_string(),

value,

}

}

}

// Operation trait for dynamic behavior

pub trait Operation: Send + Sync + std::fmt::Debug {

fn execute(&self, entity: &Entity, args: &[PropertyValue]) -> Result<PropertyValue, String>;

fn name(&self) -> &str;

}

// Entity type definition

#[derive(Debug, Clone, Serialize, Deserialize)]

pub struct EntityType {

pub name: String,

pub property_types: HashMap<String, PropertyType>,

#[serde(skip)]

pub operations: HashMap<String, Arc<dyn Operation>>,

}

impl EntityType {

pub fn new(name: &str) -> Self {

Self {

name: name.to_string(),

property_types: HashMap::new(),

operations: HashMap::new(),

}

}

pub fn add_property_type(&mut self, property_type: PropertyType) {

self.property_types.insert(property_type.name.clone(), property_type);

}

pub fn add_operation(&mut self, operation: Arc<dyn Operation>) {

self.operations.insert(operation.name().to_string(), operation);

}

pub fn get_property_type(&self, name: &str) -> Option<&PropertyType> {

self.property_types.get(name)

}

}

// Entity instance

#[derive(Debug, Clone, Serialize, Deserialize)]

pub struct Entity {

pub entity_type: String, // Reference to EntityType name

pub properties: HashMap<String, Property>,

}

impl Entity {

pub fn new(entity_type: &str) -> Self {

Self {

entity_type: entity_type.to_string(),

properties: HashMap::new(),

}

}

pub fn add_property(&mut self, property: Property) {

self.properties.insert(property.property_type.clone(), property);

}

pub fn get_property(&self, name: &str) -> Option<&PropertyValue> {

self.properties.get(name).map(|p| &p.value)

}

pub fn set_property(&mut self, name: &str, value: PropertyValue) {

if let Some(property) = self.properties.get_mut(name) {

property.value = value;

}

}

}

// Registry to manage types and instances

pub struct EntityRegistry {

entity_types: HashMap<String, EntityType>,

entities: HashMap<String, Entity>,

}

impl EntityRegistry {

pub fn new() -> Self {

Self {

entity_types: HashMap::new(),

entities: HashMap::new(),

}

}

pub fn register_type(&mut self, entity_type: EntityType) {

self.entity_types.insert(entity_type.name.clone(), entity_type);

}

pub fn create_entity(&mut self, type_name: &str, id: &str) -> Result<(), String> {

if !self.entity_types.contains_key(type_name) {

return Err(format!("Unknown entity type: {}", type_name));

}

let entity = Entity::new(type_name);

self.entities.insert(id.to_string(), entity);

Ok(())

}

// Returns a mutable reference to an entity, if it exists

pub fn get_entity_mut(&mut self, id: &str) -> Option<&mut Entity> {

self.entities.get_mut(id)

}

pub fn execute_operation(

&self,

entity_id: &str,

operation_name: &str,

args: &[PropertyValue]

) -> Result<PropertyValue, String> {

let entity = self.entities.get(entity_id)

.ok_or_else(|| format!("Entity not found: {}", entity_id))?;

let entity_type = self.entity_types.get(&entity.entity_type)

.ok_or_else(|| format!("Entity type not found: {}", entity.entity_type))?;

let operation = entity_type.operations.get(operation_name)

.ok_or_else(|| format!("Operation not found: {}", operation_name))?;

operation.execute(entity, args)

}

}

// Example operations

#[derive(Debug)]

struct DriveOperation;

impl Operation for DriveOperation {

fn execute(&self, entity: &Entity, _args: &[PropertyValue]) -> Result<PropertyValue, String> {

if let Some(PropertyValue::String(maker)) = entity.get_property("maker") {

Ok(PropertyValue::String(format!("Driving the {} vehicle", maker)))

} else {

Ok(PropertyValue::String("Driving vehicle".to_string()))

}

}

fn name(&self) -> &str {

"drive"

}

}

#[derive(Debug)]

struct MaintenanceOperation;

impl Operation for MaintenanceOperation {

fn execute(&self, entity: &Entity, _args: &[PropertyValue]) -> Result<PropertyValue, String> {

if let Some(PropertyValue::Integer(miles)) = entity.get_property("miles") {

let next_maintenance = miles + 5000;

Ok(PropertyValue::String(format!("Next maintenance due at {} miles", next_maintenance)))

} else {

Ok(PropertyValue::String("Maintenance scheduled".to_string()))

}

}

fn name(&self) -> &str {

"perform_maintenance"

}

}

// Usage example

fn example_usage() -> Result<(), String> {

let mut registry = EntityRegistry::new();

// Define vehicle type

let mut vehicle_type = EntityType::new("Vehicle");

vehicle_type.add_property_type(PropertyType::new("maker", "String", true));

vehicle_type.add_property_type(PropertyType::new("model", "String", true));

vehicle_type.add_property_type(PropertyType::new("year", "Integer", true));

vehicle_type.add_property_type(PropertyType::new("miles", "Integer", false));

vehicle_type.add_operation(Arc::new(DriveOperation));

vehicle_type.add_operation(Arc::new(MaintenanceOperation));

registry.register_type(vehicle_type);

// Create a new entity instance

registry.create_entity("Vehicle", "vehicle_1")?;

// Get a mutable reference to the new entity and set its properties

if let Some(car) = registry.get_entity_mut("vehicle_1") {

car.add_property(Property::new("maker", PropertyValue::String("Tesla".to_string())));

car.add_property(Property::new("model", PropertyValue::String("Model 3".to_string())));

car.add_property(Property::new("year", PropertyValue::Integer(2022)));

car.add_property(Property::new("miles", PropertyValue::Integer(15000)));

}

// Execute the drive operation and print the result

let drive_result = registry.execute_operation("vehicle_1", "drive", &[])?;

println!("Drive operation result: {:?}", drive_result);

// Execute the maintenance operation and print the result

let maintenance_result = registry.execute_operation("vehicle_1", "perform_maintenance", &[])?;

println!("Maintenance operation result: {:?}", maintenance_result);

Ok(())

}

fn main() {

match example_usage() {

Ok(_) => println!("Example completed successfully."),

Err(e) => eprintln!("Error: {}", e),

}

}

The Rust implementation provides several advantages:

- Type Safety: Enum-based property values ensure type safety while maintaining flexibility.

- Memory Safety: Rust’s ownership model prevents common memory issues found in dynamic systems.

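- Performance: Zero-cost abstractions and compile-time optimizations, although operations in this design are still dispatched dynamically through trait objects and hash-map lookups.

- Concurrency: The Send + Sync bounds on Operation, together with Arc, allow operations to be shared safely across threads.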

TypeScript Implementation

TypeScript brings static typing to JavaScript’s dynamic nature, providing an excellent balance for AOM implementations:

// Type definitions for property values

type PropertyValue = string | number | boolean | Date;

interface PropertyType {

name: string;

valueType: string;

required: boolean;

defaultValue?: PropertyValue;

}

interface Property {

propertyType: string;

value: PropertyValue;

}

// Operation interface with proper typing

interface Operation {

name: string;

execute(entity: Entity, args: PropertyValue[]): PropertyValue;

}

// Index signature that allows dynamic property access by name

interface PropertyMap {

[key: string]: PropertyValue;

}

class EntityType {

private propertyTypes: Map<string, PropertyType> = new Map();

private operations: Map<string, Operation> = new Map();

constructor(public readonly typeName: string) {}

addPropertyType(propertyType: PropertyType): void {

this.propertyTypes.set(propertyType.name, propertyType);

}

addOperation(operation: Operation): void {

this.operations.set(operation.name, operation);

}

getPropertyType(name: string): PropertyType | undefined {

return this.propertyTypes.get(name);

}

getOperation(name: string): Operation | undefined {

return this.operations.get(name);

}

getAllPropertyTypes(): PropertyType[] {

return Array.from(this.propertyTypes.values());

}

// Runtime type check for property values (returns false for unknown properties)

validateProperty(name: string, value: PropertyValue): boolean {

const propertyType = this.getPropertyType(name);

if (!propertyType) return false;

switch (propertyType.valueType) {

case 'string':

return typeof value === 'string';

case 'number':

return typeof value === 'number';

case 'boolean':

return typeof value === 'boolean';

case 'date':

return value instanceof Date;

default:

return false;

}

}

}

class Entity {

private properties: Map<string, Property> = new Map();

constructor(public readonly entityType: EntityType) {

// Initialize with default values

entityType.getAllPropertyTypes().forEach(propType => {

if (propType.defaultValue !== undefined) {

this.setProperty(propType.name, propType.defaultValue);

}

});

}

setProperty(name: string, value: PropertyValue): boolean {

const propertyType = this.entityType.getPropertyType(name);

if (!propertyType) {

throw new Error(`Unknown property type: ${name}`);

}

if (!this.entityType.validateProperty(name, value)) {

throw new Error(`Invalid property: ${name} with value ${value}`);

}

this.properties.set(name, {

propertyType: name,

value

});

return true;

}

getProperty<T extends PropertyValue>(name: string): T | undefined {

const property = this.properties.get(name);

return property?.value as T;

}

executeOperation(operationName: string, args: PropertyValue[] = []): PropertyValue {

const operation = this.entityType.getOperation(operationName);

if (!operation) {

throw new Error(`Unknown operation: ${operationName}`);

}

return operation.execute(this, args);

}

// Dynamic property access with Proxy

static withDynamicAccess(entity: Entity): Entity & PropertyMap {

return new Proxy(entity, {

get(target, prop: string) {

if (prop in target) {

return (target as any)[prop];

}

return target.getProperty(prop);

},

set(target, prop: string, value: PropertyValue) {

try {

target.setProperty(prop, value);

return true;

} catch {

return false;

}

}

}) as Entity & PropertyMap;

}

}

// Enhanced operation implementations

class DriveOperation implements Operation {

name = 'drive';

execute(entity: Entity, args: PropertyValue[]): PropertyValue {

const maker = entity.getProperty<string>('maker') || 'Unknown';

const speed = args[0] as number || 60;

return `Driving the ${maker} at ${speed} mph`;

}

}

class MaintenanceOperation implements Operation {

name = 'performMaintenance';

execute(entity: Entity, args: PropertyValue[]): PropertyValue {

const miles = entity.getProperty<number>('miles') || 0;

const maintenanceType = args[0] as string || 'basic';

// Business logic for maintenance

const cost = maintenanceType === 'premium' ? 150 : 75;

const nextDue = miles + (maintenanceType === 'premium' ? 10000 : 5000);

return `${maintenanceType} maintenance completed. Cost: $${cost}. Next due: ${nextDue} miles`;

}

}

// Factory for creating entities with fluent interface

class EntityFactory {

private types: Map<string, EntityType> = new Map();

defineType(name: string): TypeBuilder {

return new TypeBuilder(name, this);

}

registerType(entityType: EntityType): void {

this.types.set(entityType.typeName, entityType);

}

createEntity(typeName: string): Entity {

const type = this.types.get(typeName);

if (!type) {

throw new Error(`Unknown entity type: ${typeName}`);

}

return Entity.withDynamicAccess(new Entity(type));

}

}

class TypeBuilder {

private entityType: EntityType;

constructor(typeName: string, private factory: EntityFactory) {

this.entityType = new EntityType(typeName);

}

property(name: string, type: string, required = false, defaultValue?: PropertyValue): TypeBuilder {

this.entityType.addPropertyType({ name, valueType: type, required, defaultValue });

return this;

}

operation(operation: Operation): TypeBuilder {

this.entityType.addOperation(operation);

return this;

}

build(): EntityType {

this.factory.registerType(this.entityType);

return this.entityType;

}

}

// Usage example with modern TypeScript features

const factory = new EntityFactory();

// Define vehicle type with fluent interface

factory.defineType('Vehicle')

.property('maker', 'string', true)

.property('model', 'string', true)

.property('year', 'number', true, 2024)

.property('miles', 'number', false, 0)

.property('isElectric', 'boolean', false, false)

.operation(new DriveOperation())

.operation(new MaintenanceOperation())

.build();

// Create and use vehicle with dynamic property access

const vehicle = factory.createEntity('Vehicle') as Entity & PropertyMap;

// Dynamic property access; values are validated at runtime by the Proxy's set trap

vehicle.maker = 'Tesla';

vehicle.model = 'Model 3';

vehicle.isElectric = true;

console.log(vehicle.executeOperation('drive', [75]));

console.log(vehicle.executeOperation('performMaintenance', ['premium']));

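// Dynamic property enumeration: iterate the type's property definitions,

// because Object.keys() on the proxy only lists the Entity's own fields

vehicle.entityType.getAllPropertyTypes().forEach(pt => {

console.log(`${pt.name}: ${vehicle.getProperty(pt.name)}`);

});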

The TypeScript implementation provides:

- Gradual Typing: Mix dynamic and static typing as needed.

- Modern Language Features: Generics, type guards, Proxy objects, and fluent interfaces.

- Developer Experience: Excellent tooling support with autocomplete and type checking.

- Flexibility: Easy migration from JavaScript while adding type safety incrementally.
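The `validateProperty` method above checks types at runtime but returns a plain boolean. A user-defined type guard goes one step further by also narrowing the static type. The sketch below shows the idea; `isNumber`, `isDate`, and `describeValue` are hypothetical helpers that are not part of the listing above.

```typescript
// Sketch of user-defined type guards over a PropertyValue union.
// The helper names (isNumber, isDate, describeValue) are hypothetical.

type PropertyValue = string | number | boolean | Date;

function isNumber(value: PropertyValue | undefined): value is number {
  return typeof value === "number";
}

function isDate(value: PropertyValue | undefined): value is Date {
  return value instanceof Date;
}

// After a guard succeeds, the compiler narrows the type, so no cast is needed.
function describeValue(value: PropertyValue | undefined): string {
  if (value === undefined) return "missing";
  if (isNumber(value)) return `number ${value.toFixed(1)}`;
  if (isDate(value)) return `date ${value.getUTCFullYear()}`;
  return `${typeof value} ${String(value)}`;
}

const stored = new Map<string, PropertyValue>([
  ["miles", 15000],
  ["maker", "Tesla"],
  ["purchased", new Date(Date.UTC(2022, 0, 15))],
]);

for (const key of ["miles", "maker", "purchased", "color"]) {
  console.log(`${key}: ${describeValue(stored.get(key))}`);
}
```

Compared with `entity.getProperty<number>('miles')`, which trusts the caller's type argument, a guard only narrows after an actual runtime check.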

Enhanced Ruby Implementation

Ruby’s metaprogramming capabilities make it particularly well-suited for AOM. Let’s enhance the original implementation with modern Ruby features:

require 'date'

require 'json'

require 'securerandom'

# Enhanced PropertyType with validation

class PropertyType

attr_reader :name, :type, :required, :validator

def initialize(name, type, required: false, default: nil, &validator)

@name = name

@type = type

@required = required

@default = default

@validator = validator

end

def valid?(value)

return false if @required && value.nil?

return true if value.nil? && !@required

type_valid = case @type

when :string then value.is_a?(String)

when :integer then value.is_a?(Integer)

when :float then value.is_a?(Float) || value.is_a?(Integer)

when :boolean then [true, false].include?(value)

when :date then value.is_a?(Date) || value.is_a?(Time)

else true

end

type_valid && (@validator.nil? || @validator.call(value))

end

def default_value

@default.respond_to?(:call) ? @default.call : @default

end

end

# Enhanced EntityType with DSL

class EntityType

attr_reader :name, :property_types, :operations, :validations

def initialize(name, &block)

@name = name

@property_types = {}

@operations = {}

@validations = []

instance_eval(&block) if block_given?

end

# DSL methods

def property(name, type, **options, &validator)

@property_types[name] = PropertyType.new(name, type, **options, &validator)

end

def operation(name, &block)

@operations[name] = block

end

def validate(&block)

@validations << block

end

def valid_entity?(entity)

@validations.all? { |validation| validation.call(entity) }

end

def create_entity(**attributes)

Entity.new(self, attributes)

end

end

# Enhanced Entity with method delegation and validations

class Entity

attr_reader :entity_type, :id

def initialize(entity_type, attributes = {})

@entity_type = entity_type

@properties = {}

@id = attributes.delete(:id) || SecureRandom.uuid

# Set default values

@entity_type.property_types.each do |name, prop_type|

@properties[name] = prop_type.default_value unless prop_type.default_value.nil?

end

# Set provided attributes

attributes.each { |name, value| set_property(name, value) }

# Add dynamic methods for properties

create_property_methods

# Validate entity

validate!

end

def set_property(name, value)

prop_type = @entity_type.property_types[name]

raise ArgumentError, "Unknown property: #{name}" unless prop_type

raise ArgumentError, "Invalid value for #{name}" unless prop_type.valid?(value)

@properties[name] = value

# Entity-level validations run only at construction time; setters re-check the property type but not cross-field rules

end

def get_property(name)

@properties[name]

end

def execute_operation(name, *args)

operation = @entity_type.operations[name]

raise ArgumentError, "Unknown operation: #{name}" unless operation

instance_exec(*args, &operation)

end

def to_h

@properties.dup.merge(entity_type: @entity_type.name, id: @id)

end

def to_json(*args)

to_h.to_json(*args)

end

private

def create_property_methods

@entity_type.property_types.each do |name, _|

# Getter

define_singleton_method(name) { get_property(name) }

# Setter

define_singleton_method("#{name}=") { |value| set_property(name, value) }

# Predicate method for boolean properties

if @entity_type.property_types[name].type == :boolean

define_singleton_method("#{name}?") { !!get_property(name) }

end

end

end

def validate!

# Check required properties

@entity_type.property_types.each do |name, prop_type|

if prop_type.required && @properties[name].nil?

raise ArgumentError, "Required property missing: #{name}"

end

end

# Run entity-level validations

unless @entity_type.valid_entity?(self)

raise ArgumentError, "Entity validation failed"

end

end

end

# In-memory registry with JSON export

class EntityRegistry

def initialize

@entity_types = {}

@entities = {}

end

def define_type(name, &block)

@entity_types[name] = EntityType.new(name, &block)

end

def create_entity(type_name, **attributes)

entity_type = @entity_types[type_name]

raise ArgumentError, "Unknown entity type: #{type_name}" unless entity_type

entity = entity_type.create_entity(**attributes)

@entities[entity.id] = entity

entity

end

def find_entity(id)

@entities[id]

end

def find_entities_by_type(type_name)

@entities.values.select { |entity| entity.entity_type.name == type_name }

end

def export_to_json

{

entity_types: @entity_types.keys,

entities: @entities.values.map(&:to_h)

}.to_json

end

end

# Usage example with modern Ruby features

registry = EntityRegistry.new

# Define vehicle type with validations

registry.define_type('Vehicle') do

property :maker, :string, required: true

property :model, :string, required: true

property :year, :integer, required: true do |year|

year.between?(1900, Date.today.year + 1)

end

property :miles, :integer, default: 0 do |miles|

miles >= 0

end

property :electric, :boolean, default: false

# Entity-level validation

validate do |entity|

# Electric vehicles must have a model year of 2010 or later

!entity.electric? || entity.year >= 2010

end

operation :drive do |distance = 10|

current_miles = miles || 0

self.miles = current_miles + distance

"Drove #{distance} miles in #{maker} #{model}. Total miles: #{miles}"

end

operation :maintenance do |type = 'basic'|

cost = type == 'premium' ? 150 : 75

next_due = miles + (type == 'premium' ? 10000 : 5000)

"#{type.capitalize} maintenance completed for #{maker} #{model}. " \

"Cost: $#{cost}. Next maintenance due at #{next_due} miles."

end

end

# Create and use vehicles

tesla = registry.create_entity('Vehicle',

maker: 'Tesla',

model: 'Model S',

year: 2023,

electric: true

)

toyota = registry.create_entity('Vehicle',

maker: 'Toyota',

model: 'Camry',

year: 2022

)

# Use dynamic methods

puts tesla.execute_operation(:drive, 50)

puts toyota.execute_operation(:maintenance, 'premium')

# Access properties naturally

puts "#{tesla.maker} #{tesla.model} is electric: #{tesla.electric?}"

puts "Toyota has #{toyota.miles} miles"

# Export to JSON

puts registry.export_to_json

MongoDB Integration

Modern document databases like MongoDB provide natural storage for AOM entities. Here’s how to integrate AOM with MongoDB:

import { MongoClient, Collection, Db, ObjectId } from 'mongodb';

interface MongoEntity {

_id?: ObjectId; // generated by MongoDB on insert

entityType: string;

properties: Record<string, any>;

createdAt: Date;

updatedAt: Date;

}

interface MongoEntityType {

_id?: ObjectId;

name: string;

propertyTypes: Record<string, any>;

version: number;

createdAt: Date;

}

class MongoEntityStore {

private db: Db;

private entitiesCollection: Collection<MongoEntity>;

private typesCollection: Collection<MongoEntityType>;

constructor(db: Db) {

this.db = db;

this.entitiesCollection = db.collection<MongoEntity>('entities');

this.typesCollection = db.collection<MongoEntityType>('entity_types');

}

async saveEntityType(entityType: EntityType): Promise<void> {

const mongoType: MongoEntityType = {

name: entityType.typeName,

propertyTypes: Object.fromEntries(

entityType.getAllPropertyTypes().map(pt => [pt.name, pt])

),

version: 1,

createdAt: new Date()

};

await this.typesCollection.replaceOne(

{ name: entityType.typeName },

mongoType,

{ upsert: true }

);

}

// Returns the generated ObjectId as a hex string

async saveEntity(entity: Entity): Promise<string> {

const mongoEntity: MongoEntity = {

entityType: entity.entityType.typeName,

properties: this.serializeProperties(entity),

createdAt: new Date(),

updatedAt: new Date()

};

const result = await this.entitiesCollection.insertOne(mongoEntity);

return result.insertedId.toString();

}

async findEntitiesByType(typeName: string): Promise<MongoEntity[]> {

return await this.entitiesCollection

.find({ entityType: typeName })

.toArray();

}

// The id must be the hex string returned by saveEntity; it is converted

// back to an ObjectId because that is how MongoDB stored the _id

async findEntity(id: string): Promise<MongoEntity | null> {

return await this.entitiesCollection.findOne({ _id: new ObjectId(id) });

}

// Keys in `updates` should be field paths such as 'properties.miles'

async updateEntity(id: string, updates: Record<string, any>): Promise<void> {

await this.entitiesCollection.updateOne(

{ _id: new ObjectId(id) },

{

$set: {

...updates,

updatedAt: new Date()

}

}

);

}

// Complex queries using MongoDB aggregation

async getEntityStatistics(typeName: string): Promise<any> {

return await this.entitiesCollection.aggregate([

{ $match: { entityType: typeName } },

{

$group: {

_id: '$entityType',

count: { $sum: 1 },

avgMiles: { $avg: '$properties.miles' },

makers: { $addToSet: '$properties.maker' }

}

}

]).toArray();

}

// Full-text search across entities

async searchEntities(query: string): Promise<MongoEntity[]> {

return await this.entitiesCollection

.find({ $text: { $search: query } })

.toArray();

}

private serializeProperties(entity: Entity): Record<string, any> {

const result: Record<string, any> = {};

entity.entityType.getAllPropertyTypes().forEach(pt => {

const value = entity.getProperty(pt.name);

if (value !== undefined) {

result[pt.name] = value;

}

});

return result;

}

}

// Usage with indexes for performance

async function setupDatabase() {

const client = new MongoClient('mongodb://localhost:27017');

await client.connect();

const db = client.db('aom_example');

const store = new MongoEntityStore(db);

// Create indexes for better performance

await db.collection('entities').createIndex({ entityType: 1 });

await db.collection('entities').createIndex({ 'properties.maker': 1 });

await db.collection('entities').createIndex({ 'properties.year': 1 });

await db.collection('entities').createIndex(

{

'properties.maker': 'text',

'properties.model': 'text'

}

);

return store;

}

Benefits of Document Storage

- Schema Evolution: MongoDB’s flexible schema allows entity types to evolve without database migrations.

- Rich Querying: MongoDB’s query language supports complex operations on nested documents.

- Indexing Strategy: Flexible indexing on any field, including nested properties.

- Aggregation Pipeline: Powerful analytics capabilities for business intelligence.

- Horizontal Scaling: Built-in sharding support for handling large datasets.

Modern Applications and Future Directions

Contemporary Usage Patterns

- Configuration Management: Modern applications use AOM-like patterns for feature flags, A/B testing configurations, and user preference systems.

- API Gateway Configuration: Services like Kong and AWS API Gateway use dynamic configuration patterns similar to AOM.

- Workflow Engines: Business process management systems employ AOM patterns to define configurable workflows.

- Multi-tenant SaaS: AOM enables SaaS applications to provide customizable data models per tenant.

Emerging Technologies

- GraphQL Schema Stitching: Dynamic schema composition shares conceptual similarities with AOM’s type composition.

- Serverless Functions: Event-driven architectures benefit from AOM’s dynamic behavior binding.

- Container Orchestration: Kubernetes uses similar patterns for dynamic resource management and configuration; Custom Resource Definitions, for example, let new resource types be registered at runtime.

- Low-Code Platforms: Modern low-code solutions extensively use AOM principles for visual application building.

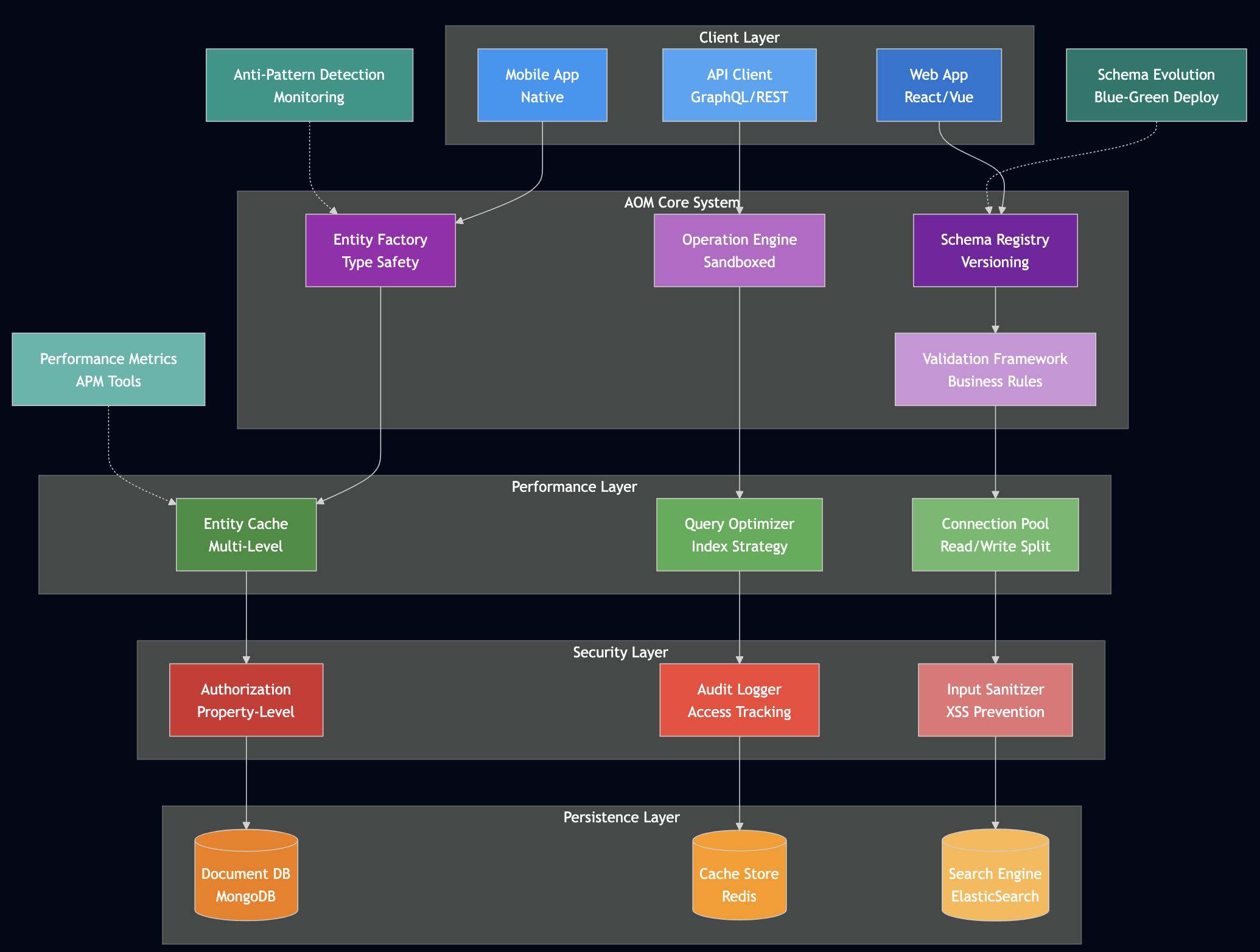

Performance Considerations and Optimizations

Caching Strategies

class CachedEntityStore {

private cache: Map<string, Entity> = new Map();

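private typeCache: Map<string, EntityType> = new Map();

// Backing store consulted on cache misses (for example, the MongoEntityStore above)

constructor(private store: { findEntity(id: string): Promise<Entity | null> }) {}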

async getEntity(id: string): Promise<Entity | null> {

// Check cache first

if (this.cache.has(id)) {

return this.cache.get(id)!;

}

// Load from database

const entity = await this.store.findEntity(id);

if (entity) {

this.cache.set(id, entity);

}

return entity;

}

invalidateEntity(id: string): void {

this.cache.delete(id);

}

}

Lazy Loading and Materialized Views

For complex entity relationships, implement lazy loading and consider materialized views for frequently accessed computed properties.
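
A minimal sketch of the materialized-view idea applied to a single computed property. The `MaterializedProperty` helper is hypothetical: it stores the computed value and recomputes it only after being told that an input changed.

```typescript
// Hypothetical helper: keeps a computed value until it is marked stale.
class MaterializedProperty<T> {
  private value: T | undefined;
  private stale = true;
  public computeCount = 0; // exposed only to make the behavior observable

  constructor(private readonly compute: () => T) {}

  get(): T {
    if (this.stale) {
      this.value = this.compute();
      this.stale = false;
      this.computeCount++;
    }
    return this.value as T;
  }

  // Call whenever a property the computation depends on is written.
  invalidate(): void {
    this.stale = true;
  }
}

// Illustrative entity: a total derived from two dynamic properties.
const orderProps = new Map<string, number>([['unitPrice', 20], ['quantity', 3]]);
const total = new MaterializedProperty<number>(
  () => (orderProps.get('unitPrice') ?? 0) * (orderProps.get('quantity') ?? 0)
);

const first = total.get();   // computed
const second = total.get();  // served from the stored value
orderProps.set('quantity', 5);
total.invalidate();
const third = total.get();   // recomputed after invalidation
```

The trade-off is the usual one for materialized data: reads get cheaper, but every write path has to remember to call `invalidate()`.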

Schema Evolution and Versioning

One of the most critical aspects of production AOM systems is managing schema evolution over time. Unlike traditional systems where database migrations handle schema changes, AOM systems must support dynamic evolution while maintaining data integrity and backward compatibility.

Version Management Strategy

interface EntityTypeVersion {

version: number;

entityTypeName: string;

changes: SchemaChange[];

compatibleWith: number[];

deprecatedIn?: number;

migrations: Migration[];

createdAt: Date;

}

interface SchemaChange {

type: 'ADD_PROPERTY' | 'REMOVE_PROPERTY' | 'MODIFY_PROPERTY' | 'ADD_OPERATION';

propertyName?: string;

oldType?: string;

newType?: string;

defaultValue?: any;

migrationRequired: boolean;

}

interface Migration {

fromVersion: number;

toVersion: number;

transform: (entity: any) => any;

reversible: boolean;

}
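
As a rough illustration of how the `Migration` records above could be chained, the sketch below walks an entity forward one version at a time. `MigrationStep` and `upgradeEntity` are hypothetical names, and the entity is modeled as a plain record for brevity.

```typescript
// Trimmed-down mirror of the Migration interface above (names are illustrative).
interface MigrationStep {
  fromVersion: number;
  toVersion: number;
  transform: (entity: Record<string, unknown>) => Record<string, unknown>;
}

// Applies single-version steps from `from` up to `to`; throws if a step is missing.
function upgradeEntity(
  entity: Record<string, unknown>,
  from: number,
  to: number,
  steps: MigrationStep[]
): Record<string, unknown> {
  let current = { ...entity };
  for (let v = from; v < to; v++) {
    const step = steps.find(s => s.fromVersion === v && s.toVersion === v + 1);
    if (!step) {
      throw new Error(`No migration from version ${v} to ${v + 1}`);
    }
    current = step.transform(current);
  }
  return { ...current, schemaVersion: to };
}

const vehicleSteps: MigrationStep[] = [
  // v1 -> v2: ADD_PROPERTY with a default value
  { fromVersion: 1, toVersion: 2, transform: e => ({ ...e, fuelEfficiency: null }) },
  // v2 -> v3: MODIFY_PROPERTY, year stored as a number instead of a string
  { fromVersion: 2, toVersion: 3, transform: e => ({ ...e, year: Number(e['year']) }) },
];

const upgraded = upgradeEntity({ maker: 'Toyota', year: '2020' }, 1, 3, vehicleSteps);
```

Failing loudly on a missing step is a deliberate choice here: silently skipping a version would leave entities in a shape that no schema version describes.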

Backward Compatibility Patterns

Additive Changes: New properties should be optional with sensible defaults:

// Safe evolution - adding optional property

let mut vehicle_type_v2 = vehicle_type_v1.clone();

vehicle_type_v2.add_property_type(PropertyType::new(

"fuel_efficiency",

"Float",

false // not required

));

vehicle_type_v2.version = 2;

Property Type Changes: Handle type evolution gracefully:

class PropertyMigration {

static migrateStringToEnum(oldValue: string, enumValues: string[]): string {

// Attempt intelligent mapping

const lowercaseValue = oldValue.toLowerCase();

const match = enumValues.find(val =>

val.toLowerCase().includes(lowercaseValue) ||

lowercaseValue.includes(val.toLowerCase())

);

return match || enumValues[0]; // fallback to first enum value

}

}

Multi-Version Support: Systems should support multiple schema versions simultaneously:

class EntityStore

def save_entity(entity, force_version: nil)

target_version = force_version || @current_schema_version

if entity.schema_version != target_version

migrated_entity = migrate_entity(entity, target_version)

store_with_version(migrated_entity, target_version)

else

store_with_version(entity, entity.schema_version)

end

end

private def migrate_entity(entity, target_version)

current_version = entity.schema_version

while current_version < target_version

migration = find_migration(current_version, current_version + 1)

entity = migration.transform(entity)

current_version += 1

end

entity.schema_version = target_version

entity

end

end

Deployment Strategies

Blue-Green Schema Deployment: Deploy new schemas alongside existing ones, gradually migrating entities:

- Deploy new schema version to “green” environment

- Run both old and new versions in parallel

- Migrate entities in batches with rollback capability

- Switch traffic to new version

- Decommission old version after validation period
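
The batch migration step with rollback can be sketched in memory as follows. `StoredEntity` and `migrateInBatches` are hypothetical; a real system would wrap each batch in whatever transaction or snapshot mechanism the database offers.

```typescript
type StoredEntity = { id: string; schemaVersion: number; data: Record<string, unknown> };

// Migrates entities batch by batch. A failing batch is restored from its snapshot
// and processing stops, leaving earlier batches migrated.
// Assumes `transform` returns a new object instead of mutating its argument.
function migrateInBatches(
  entities: StoredEntity[],
  batchSize: number,
  targetVersion: number,
  transform: (e: StoredEntity) => StoredEntity
): { migrated: number; failedBatch: number | null } {
  let migrated = 0;
  for (let start = 0; start < entities.length; start += batchSize) {
    const end = Math.min(start + batchSize, entities.length);
    const snapshot = entities.slice(start, end); // originals kept for rollback
    try {
      for (let i = start; i < end; i++) {
        const next = transform(entities[i]);
        entities[i] = { ...next, schemaVersion: targetVersion };
      }
      migrated += end - start;
    } catch {
      // Roll back only the current batch
      for (let i = start; i < end; i++) entities[i] = snapshot[i - start];
      return { migrated, failedBatch: start / batchSize };
    }
  }
  return { migrated, failedBatch: null };
}

const fleet: StoredEntity[] = [
  { id: 'a', schemaVersion: 1, data: { year: '2019' } },
  { id: 'b', schemaVersion: 1, data: { year: '2020' } },
  { id: 'c', schemaVersion: 1, data: { year: '2021' } },
  { id: 'd', schemaVersion: 1, data: { year: 'unknown' } },
];

const outcome = migrateInBatches(fleet, 2, 2, e => {
  const year = Number(e.data['year']);
  if (isNaN(year)) throw new Error(`Cannot convert year for ${e.id}`);
  return { ...e, data: { ...e.data, year } };
});
```

In this run the first batch is migrated, while the second batch fails on entity `d` and is restored, so entity `c` returns to its version 1 shape.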

Feature Flags for Schema Changes: Control schema availability through configuration:

class SchemaFeatureFlags {

private flags: Map<string, boolean> = new Map();

enableSchemaVersion(entityType: string, version: number): void {

this.flags.set(`${entityType}_v${version}`, true);

}

isSchemaVersionEnabled(entityType: string, version: number): boolean {

return this.flags.get(`${entityType}_v${version}`) || false;

}

}

Performance Optimization Deep Dive

AOM systems face unique performance challenges due to their dynamic nature. However, careful optimization (caching, targeted indexing, and lazy loading, all covered in this section) can bring performance close to that of traditional systems while maintaining flexibility.

Caching Strategies

Entity Type Definition Caching: Cache compiled entity types to avoid repeated parsing:

use std::sync::{Arc, RwLock};

use std::collections::HashMap;

pub struct EntityTypeCache {

types: RwLock<HashMap<String, Arc<EntityType>>>,

compiled_operations: RwLock<HashMap<String, CompiledOperation>>,

}

impl EntityTypeCache {

pub fn get_or_compile(&self, type_name: &str) -> Arc<EntityType> {

// Try read lock first

{

let cache = self.types.read().unwrap();

if let Some(entity_type) = cache.get(type_name) {

return entity_type.clone();

}

}

// Compile with write lock

let mut cache = self.types.write().unwrap();

// Double-check pattern to avoid race conditions

if let Some(entity_type) = cache.get(type_name) {

return entity_type.clone();

}

let compiled_type = self.compile_entity_type(type_name);

let arc_type = Arc::new(compiled_type);

cache.insert(type_name.to_string(), arc_type.clone());

arc_type

}

}

Property Access Optimization: Use property maps with optimized access patterns:

class OptimizedEntity {

private propertyCache: Map<string, any> = new Map();

private accessCounts: Map<string, number> = new Map();

getProperty<T>(name: string): T | undefined {

// Track access patterns for optimization

this.accessCounts.set(name, (this.accessCounts.get(name) || 0) + 1);

// Check cache first

if (this.propertyCache.has(name)) {

return this.propertyCache.get(name);

}

// Load from storage and cache frequently accessed properties

const value = this.loadPropertyFromStorage(name);

if (this.accessCounts.get(name)! > 3) {

this.propertyCache.set(name, value);

}

return value;

}

}

Database Optimization

Strategic Indexing: Create indexes based on query patterns rather than all properties:

// MongoDB optimization for AOM queries

await db.collection('entities').createIndex({

'entityType': 1,

'properties.status': 1,

'updatedAt': -1

}, {

name: 'entity_status_time_idx',

partialFilterExpression: {

'properties.status': { $exists: true }

}

});

// Compound index for common query patterns

await db.collection('entities').createIndex({

'entityType': 1,

'properties.category': 1,

'properties.priority': 1

});

Query Optimization Patterns: Use aggregation pipelines for complex queries:

class OptimizedEntityStore {

async findEntitiesWithAggregation(criteria) {

return await this.collection.aggregate([

// Match stage - use indexes

{

$match: {

entityType: criteria.type,

'properties.status': { $in: criteria.statuses }

}

},

// Project only needed fields early

{

$project: {

_id: 1,

entityType: 1,

'properties.name': 1,

'properties.status': 1,

'properties.priority': 1

}

},

// Sort with index support

{

$sort: { 'properties.priority': -1, _id: 1 }

},

// Limit results early

{ $limit: criteria.limit || 100 }

]).toArray();

}

}

Connection Pooling and Read Replicas: Optimize database connections for high-throughput scenarios:

class DatabaseManager {

private writePool: ConnectionPool;

private readPools: ConnectionPool[];

async saveEntity(entity: Entity): Promise<void> {

// Use write connection for mutations

const connection = await this.writePool.getConnection();

try {

await connection.save(entity);

} finally {

this.writePool.releaseConnection(connection);

}

}

async findEntities(query: any): Promise<Entity[]> {

// Use read replicas for queries

const readPool = this.selectOptimalReadPool();

const connection = await readPool.getConnection();

try {

return await connection.find(query);

} finally {

readPool.releaseConnection(connection);

}

}

}
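
The `selectOptimalReadPool` call above is left undefined. One simple policy it could use is round-robin selection that skips unhealthy replicas. The sketch below assumes a hypothetical `ReplicaPool` shape with a `healthy` flag maintained elsewhere (for example, by a health check).

```typescript
// Hypothetical replica descriptor; a real pool would also hold connections.
interface ReplicaPool {
  label: string;
  healthy: boolean;
}

// Round-robin selector that skips replicas currently marked unhealthy.
class RoundRobinSelector {
  private nextIndex = 0;

  constructor(private readonly pools: ReplicaPool[]) {}

  select(): ReplicaPool {
    // Try each pool at most once per call, starting where the last call stopped.
    for (let attempt = 0; attempt < this.pools.length; attempt++) {
      const pool = this.pools[this.nextIndex];
      this.nextIndex = (this.nextIndex + 1) % this.pools.length;
      if (pool.healthy) return pool;
    }
    throw new Error('No healthy read replica available');
  }
}

const selector = new RoundRobinSelector([
  { label: 'replica-1', healthy: true },
  { label: 'replica-2', healthy: false },
  { label: 'replica-3', healthy: true },
]);
const picks = [selector.select().label, selector.select().label, selector.select().label];
```

Round-robin ignores replica load and replication lag, so "optimal" here only means "healthy and next in line"; a latency-aware policy would need extra metrics.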

Memory Management

Lazy Loading: Load entity properties on demand:

class LazyEntity

def initialize(entity_type, id)

@entity_type = entity_type

@id = id

@loaded_properties = {}

@all_loaded = false

end

def method_missing(method_name, *args)

property_name = method_name.to_s

if @entity_type.has_property?(property_name)

load_property(property_name) unless @loaded_properties.key?(property_name)

@loaded_properties[property_name]

else

super

end

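end

# Keep respond_to? consistent with the dynamic accessors above

def respond_to_missing?(method_name, include_private = false)

@entity_type.has_property?(method_name.to_s) || super

end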

private def load_property(property_name)

# Load single property from database

value = Database.load_property(@id, property_name)

@loaded_properties[property_name] = value

end

end

Weak References for Caches: Prevent memory leaks in entity caches:

use std::sync::{Arc, Weak};

use std::collections::HashMap;

pub struct WeakEntityCache {

entities: HashMap<String, Weak<Entity>>,

}

impl WeakEntityCache {

pub fn get(&mut self, id: &str) -> Option<Arc<Entity>> {

// Evict the entry on lookup if the entity has already been dropped

if let Some(weak_ref) = self.entities.get(id) {

if let Some(entity) = weak_ref.upgrade() {

return Some(entity);

} else {

self.entities.remove(id);

}

}

None

}

pub fn insert(&mut self, id: String, entity: Arc<Entity>) {

self.entities.insert(id, Arc::downgrade(&entity));

}

}

Security and Validation Framework

Security is critical in AOM systems because schemas and operations can change at runtime. Traditional security models must be extended to handle these runtime modifications safely.

Authorization Framework

Schema Modification Permissions: Control who can modify entity types:

interface SchemaPermission {

principal: string; // user or role

entityType: string;

actions: SchemaAction[];

conditions?: PermissionCondition[];

}

enum SchemaAction {

CREATE_TYPE = 'CREATE_TYPE',

MODIFY_TYPE = 'MODIFY_TYPE',

DELETE_TYPE = 'DELETE_TYPE',

ADD_PROPERTY = 'ADD_PROPERTY',

REMOVE_PROPERTY = 'REMOVE_PROPERTY',

ADD_OPERATION = 'ADD_OPERATION'

}

class SchemaAuthorizationService {

checkPermission(

principal: string,

action: SchemaAction,

entityType: string

): boolean {

const permissions = this.getPermissions(principal);

return permissions.some(permission =>

permission.entityType === entityType &&

permission.actions.includes(action) &&

this.evaluateConditions(permission.conditions)

);

}

}

Property-Level Access Control: Fine-grained access control for sensitive properties:

use serde::{Serialize, Deserialize};

#[derive(Debug, Clone, Serialize, Deserialize)]

pub struct PropertyAccess {

pub property_name: String,

pub read_roles: Vec<String>,

pub write_roles: Vec<String>,

pub sensitive: bool,

}

impl Entity {

pub fn get_property_secure(&self, name: &str, user_roles: &[String]) -> Result<Option<&PropertyValue>, SecurityError> {

let access = self.entity_type.get_property_access(name)

.ok_or(SecurityError::PropertyNotFound)?;

if !access.read_roles.iter().any(|role| user_roles.contains(role)) {

return Err(SecurityError::InsufficientPermissions);

}

if access.sensitive {

self.audit_property_access(name, user_roles);

}

Ok(self.properties.get(name).map(|p| &p.value))

}

}

Input Validation and Sanitization

Dynamic Property Validation: Validate properties based on runtime type definitions:

class PropertyValidator {

static validate(

property: Property,

propertyType: PropertyType,

context: ValidationContext

): ValidationResult {

const errors: string[] = [];

// Type validation

if (!this.isValidType(property.value, propertyType.valueType)) {

errors.push(`Invalid type for ${propertyType.name}`);

}

// Custom validation rules

if (propertyType.validator) {

try {

const isValid = propertyType.validator(property.value, context);

if (!isValid) {

errors.push(`Custom validation failed for ${propertyType.name}`);

}

} catch (error) {

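// A thrown value is not guaranteed to be an Error instance

const message = error instanceof Error ? error.message : String(error);

errors.push(`Validation error: ${message}`);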

}

}

// Sanitization for string properties

if (typeof property.value === 'string') {

property.value = this.sanitizeString(property.value);

}

return {

valid: errors.length === 0,

errors,

sanitizedValue: property.value

};

}

private static sanitizeString(input: string): string {

// Remove potentially dangerous content

return input

.replace(/<script\b[^<]*(?:(?!<\/script>)<[^<]*)*<\/script>/gi, '')

.replace(/javascript:/gi, '')

.replace(/on\w+\s*=/gi, '');

}

}
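
Pattern stripping of this kind tends to miss variants such as nested tags or unusual attribute spellings, so it is commonly paired with escaping at output time. The following is a minimal sketch of that complementary step, using a hypothetical `escapeHtml` helper that is not part of the sample project:

```typescript
// Escapes the five characters that are significant in HTML text and attribute values.
// The ampersand is replaced first so later entities are not double-escaped.
function escapeHtml(input: string): string {
  return input
    .replace(/&/g, '&amp;')
    .replace(/</g, '&lt;')
    .replace(/>/g, '&gt;')
    .replace(/"/g, '&quot;')
    .replace(/'/g, '&#39;');
}

const rendered = escapeHtml('<img src=x onerror="alert(1)">');
```

Escaping keeps the stored property value intact and neutralizes it only where it is rendered, which avoids silently altering user data during validation.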

Business Rule Enforcement: Implement complex validation rules across entities:

class BusinessRuleEngine

def initialize

@rules = {}

end

def add_rule(entity_type, rule_name, &block)

@rules[entity_type] ||= {}

@rules[entity_type][rule_name] = block

end

def validate_entity(entity)

errors = []

if rules = @rules[entity.entity_type.name]

rules.each do |rule_name, rule_block|

begin

result = rule_block.call(entity)

unless result.valid?

errors.concat(result.errors.map { |e| "#{rule_name}: #{e}" })

end

rescue => e

errors << "Rule #{rule_name} failed: #{e.message}"

end

end

end

ValidationResult.new(errors.empty?, errors)

end

end

# Usage example (plain Ruby; Date.today needs the date library)

require 'date'

rule_engine = BusinessRuleEngine.new

rule_engine.add_rule('Vehicle', 'valid_year') do |entity|

year = entity.get_property('year')

if year && (year < 1900 || year > Date.today.year + 1)

ValidationResult.new(false, ['Year must be between 1900 and next year'])

else

ValidationResult.new(true, [])

end

end

Operation Security

Safe Operation Binding: Ensure operations cannot execute arbitrary code:

class SecureOperationBinder {

private allowedOperations: Set<string> = new Set();

private operationSandbox: OperationSandbox;

constructor() {

// Whitelist of safe operations

this.allowedOperations.add('calculate');

this.allowedOperations.add('format');

this.allowedOperations.add('validate');

this.operationSandbox = new OperationSandbox({

allowedGlobals: ['Math', 'Date'],

timeoutMs: 5000,

memoryLimitMB: 10

});

}

bindOperation(name: string, code: string): Operation {

if (!this.allowedOperations.has(name)) {

throw new Error(`Operation ${name} not in whitelist`);

}

// Static analysis for dangerous patterns

if (this.containsDangerousPatterns(code)) {

throw new Error('Operation contains dangerous patterns');

}

return this.operationSandbox.compile(code);

}

private containsDangerousPatterns(code: string): boolean {

const dangerousPatterns = [

/eval\s*\(/,

/Function\s*\(/,

/require\s*\(/,

/import\s+/,

/process\./,

/global\./,

/window\./

];

return dangerousPatterns.some(pattern => pattern.test(code));

}

}
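
A different way to reach the same goal is to avoid code strings entirely and bind operation names to functions that were reviewed and registered at deploy time. This swaps sandboxing for a registry lookup. The sketch below uses a hypothetical `OperationRegistry` and a deliberately narrow operation signature.

```typescript
// Hypothetical registry: operations are vetted functions looked up by name,
// so type definitions reference names and never carry executable source.
type EntityOperation = (properties: { [key: string]: number }) => number;

class OperationRegistry {
  private operations = new Map<string, EntityOperation>();

  register(operationName: string, fn: EntityOperation): void {
    if (this.operations.has(operationName)) {
      throw new Error(`Operation ${operationName} is already registered`);
    }
    this.operations.set(operationName, fn);
  }

  invoke(operationName: string, properties: { [key: string]: number }): number {
    const fn = this.operations.get(operationName);
    if (!fn) {
      throw new Error(`Operation ${operationName} is not registered`);
    }
    return fn(properties);
  }
}

const operations = new OperationRegistry();
operations.register('calculateAge', props => props['currentYear'] - props['year']);
const age = operations.invoke('calculateAge', { year: 2020, currentYear: 2025 });
```

The cost is flexibility: new behavior requires a deployment, so this fits systems where the set of operations changes far less often than the entity types that use them.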

Anti-patterns and Common Pitfalls

Learning from failures is crucial for successful AOM implementations. Here are the most common anti-patterns and how to avoid them.

1. Over-Engineering Stable Domains

Anti-pattern: Applying AOM to domains that rarely change

// DON'T: Using AOM for basic user authentication

const userType = new EntityType('User');

userType.addProperty('username', 'string');

userType.addProperty('passwordHash', 'string');

userType.addProperty('email', 'string');

// Better: Use traditional class for stable domain

class User {

constructor(

public username: string,

public passwordHash: string,

public email: string

) {}

}

When to avoid AOM:

- Core business entities that haven’t changed in years

- Performance-critical code paths

- Simple CRUD operations

- Well-established domain models

2. Performance Neglect

Anti-pattern: Ignoring performance implications of dynamic queries

// DON'T: Loading all entity properties for simple operations

async function getEntityName(id) {

const entity = await entityStore.loadFullEntity(id); // Loads everything

return entity.getProperty('name');

}

// Better: Load only needed properties

async function getEntityName(id) {

return await entityStore.loadProperty(id, 'name');

}

Performance Guidelines:

- Monitor query performance continuously

- Use database profiling tools

- Implement property-level lazy loading

- Cache frequently accessed entity types

3. Type Explosion

Anti-pattern: Creating too many similar entity types instead of using properties

// DON'T: Creating separate types for minor variations

const sedanType = new EntityType('Sedan');

const suvType = new EntityType('SUV');

const truckType = new EntityType('Truck');

// Better: Use discriminator properties

const vehicleType = new EntityType('Vehicle');

vehicleType.addProperty('bodyType', 'enum', {

values: ['sedan', 'suv', 'truck']

});

Type Design Guidelines:

- Prefer composition over type proliferation

- Use enums and discriminator fields

- Consider type hierarchies carefully

- Regular type audits to identify similar types
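
A type audit can start with something as simple as comparing property names. The sketch below scores each pair of types by Jaccard similarity of their property sets and reports pairs above a threshold. The function names and the 0.5 threshold are illustrative assumptions.

```typescript
// Jaccard similarity of two property-name lists: |A ∩ B| / |A ∪ B|.
function propertyOverlap(a: string[], b: string[]): number {
  const setA = new Set(a);
  const setB = new Set(b);
  let shared = 0;
  setA.forEach(p => { if (setB.has(p)) shared++; });
  const union = setA.size + setB.size - shared;
  return union === 0 ? 1 : shared / union;
}

// Returns pairs of type names whose overlap meets the threshold.
function findSimilarTypes(
  types: { [typeName: string]: string[] },
  threshold: number
): Array<[string, string, number]> {
  const names = Object.keys(types).sort();
  const pairs: Array<[string, string, number]> = [];
  for (let i = 0; i < names.length; i++) {
    for (let j = i + 1; j < names.length; j++) {
      const score = propertyOverlap(types[names[i]], types[names[j]]);
      if (score >= threshold) pairs.push([names[i], names[j], score]);
    }
  }
  return pairs;
}

const candidates = findSimilarTypes(
  {
    Sedan: ['maker', 'model', 'year', 'trunkSize'],
    SUV: ['maker', 'model', 'year', 'towingCapacity'],
    Invoice: ['number', 'amount', 'dueDate'],
  },
  0.5
);
```

Here `Sedan` and `SUV` share three of five distinct properties, which flags them as candidates for merging into one type with a discriminator property.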

4. Missing Business Constraints

Anti-pattern: Focusing on technical flexibility while ignoring business rules

# DON'T: Allowing any combination of properties

vehicle = registry.create_entity('Vehicle',

maker: 'Tesla',

fuel_type: 'gasoline', # This makes no sense!

electric: true

)

# Better: Implement cross-property validation

class VehicleValidator

def validate(entity)

if entity.electric? && entity.fuel_type != 'electric'

raise ValidationError, "Electric vehicles must use the 'electric' fuel type"

end

end

end

Constraint Guidelines:

- Define business rules explicitly

- Implement cross-property validation

- Use state machines for complex business logic

- Regular business rule audits
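
For the state machine guideline, a minimal sketch might look like the following. The listing lifecycle, its states, and the `ListingStateMachine` class are all hypothetical and only show how allowed transitions can be made explicit data rather than scattered conditionals.

```typescript
// Hypothetical lifecycle for a vehicle listing; states and transitions are illustrative.
type ListingState = 'draft' | 'listed' | 'reserved' | 'sold';

const allowedTransitions: Record<ListingState, ListingState[]> = {
  draft: ['listed'],
  listed: ['reserved', 'draft'],
  reserved: ['sold', 'listed'],
  sold: [], // terminal state
};

class ListingStateMachine {
  constructor(private state: ListingState = 'draft') {}

  get current(): ListingState {
    return this.state;
  }

  canTransition(target: ListingState): boolean {
    return allowedTransitions[this.state].indexOf(target) !== -1;
  }

  // Rejects any move that is not listed in the transition table.
  transition(target: ListingState): void {
    if (!this.canTransition(target)) {
      throw new Error(`Illegal transition: ${this.state} -> ${target}`);
    }
    this.state = target;
  }
}

const listing = new ListingStateMachine();
listing.transition('listed');
listing.transition('reserved');
```

Because the transition table is plain data, it could itself be stored as AOM metadata and changed at runtime alongside the entity types.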

5. Cache Invalidation Problems

Anti-pattern: Inconsistent cache invalidation strategies

// DON'T: Forgetting to invalidate dependent caches

impl EntityStore {

fn update_entity_type(&mut self, entity_type: EntityType) {

self.entity_types.insert(entity_type.name.clone(), entity_type);

// Forgot to invalidate entity instances cache!

}

}

// Better: Comprehensive invalidation strategy

impl EntityStore {

fn update_entity_type(&mut self, entity_type: EntityType) {

let type_name = entity_type.name.clone();

// Update type cache

self.entity_types.insert(type_name.clone(), entity_type);

// Invalidate all dependent caches

self.entity_cache.invalidate_by_type(&type_name);

self.query_cache.invalidate_by_type(&type_name);

self.compiled_operations.remove(&type_name);

// Notify cache invalidation to other systems

self.event_bus.publish(CacheInvalidationEvent::new(type_name));

}

}

6. Inadequate Error Handling

Anti-pattern: Generic error messages that don’t help debugging

// DON'T: Vague error messages

throw new Error('Property validation failed');

// Better: Detailed, actionable error messages

throw new PropertyValidationError({

entityType: 'Vehicle',

entityId: 'vehicle_123',

property: 'year',

value: 1850,

constraint: 'must be between 1900 and 2025',

suggestedFix: 'Check data source for year property'

});
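
`PropertyValidationError` is not defined in the snippet above. One possible shape, offered as an assumption and not as the sample project's actual class, is an `Error` subclass that keeps the structured details and builds a readable message from them:

```typescript
// Hypothetical error type carrying the context fields used above.
interface PropertyValidationDetails {
  entityType: string;
  entityId: string;
  property: string;
  value: unknown;
  constraint: string;
  suggestedFix?: string;
}

class PropertyValidationError extends Error {
  readonly details: PropertyValidationDetails;

  constructor(details: PropertyValidationDetails) {
    super(
      `${details.entityType}[${details.entityId}].${details.property} = ` +
      `${JSON.stringify(details.value)} violates constraint: ${details.constraint}`
    );
    this.name = 'PropertyValidationError';
    this.details = details;
    // Keeps instanceof working when compiling to ES5 targets
    Object.setPrototypeOf(this, PropertyValidationError.prototype);
  }
}

const yearError = new PropertyValidationError({
  entityType: 'Vehicle',
  entityId: 'vehicle_123',
  property: 'year',
  value: 1850,
  constraint: 'must be between 1900 and 2025',
  suggestedFix: 'Check data source for year property',
});
```

Keeping the details as a structured field lets logging and API layers report them without parsing the message string.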

7. Security Oversights

Anti-pattern: Treating dynamic properties like static ones for security

# DON'T: No access control on dynamic properties

def get_property(entity_id, property_name):
    entity = load_entity(entity_id)
    return entity.get_property(property_name)  # No security check!

# Better: Property-level security

def get_property(entity_id, property_name, user_context):
    entity = load_entity(entity_id)

    if not has_property_access(user_context, entity.type, property_name):
        raise SecurityError(f"Access denied to property {property_name}")

    if is_sensitive_property(entity.type, property_name):
        audit_log.record_access(user_context, entity_id, property_name)

    return entity.get_property(property_name)

8. Testing Gaps

Anti-pattern: Only testing the happy path with AOM systems

// DON'T: Only test valid configurations

test('creates vehicle entity', () => {

const vehicle = factory.createEntity('Vehicle', {

maker: 'Toyota',

model: 'Camry'

});

expect(vehicle.maker).toBe('Toyota');

});

// Better: Test edge cases and error conditions

describe('Vehicle Entity', () => {

test('rejects invalid property types', () => {

expect(() => {

factory.createEntity('Vehicle', {

maker: 123, // Should be string

model: 'Camry'

});

}).toThrow('Invalid property type');

});

test('handles missing required properties', () => {

expect(() => {

factory.createEntity('Vehicle', {

model: 'Camry' // Missing required 'maker'

});

}).toThrow('Required property missing: maker');

});

});

Prevention Strategies

- Regular Architecture Reviews: Schedule periodic reviews of entity type proliferation and usage patterns.

- Performance Monitoring: Implement continuous monitoring of query performance and cache hit rates.

- Security Audits: Regular audits of property access patterns and operation bindings.

- Automated Testing: Comprehensive test suites covering edge cases and error conditions.

- Documentation Standards: Maintain clear documentation of business rules and constraints.
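
As one concrete starting point for the performance monitoring item, the sketch below wraps an in-memory cache with hit and miss counters. `InstrumentedCache` is a hypothetical name; in practice the counters would be exported to whatever metrics system is already in place.

```typescript
// Hypothetical wrapper that counts hits and misses for an in-memory cache.
class InstrumentedCache<V> {
  private entries = new Map<string, V>();
  private hits = 0;
  private misses = 0;

  get(key: string): V | undefined {
    if (this.entries.has(key)) {
      this.hits++;
      return this.entries.get(key);
    }
    this.misses++;
    return undefined;
  }

  set(key: string, value: V): void {
    this.entries.set(key, value);
  }

  // Fraction of lookups served from the cache; 0 when nothing was looked up.
  hitRate(): number {
    const lookups = this.hits + this.misses;
    return lookups === 0 ? 0 : this.hits / lookups;
  }
}

const typeLookups = new InstrumentedCache<string>();
typeLookups.get('Vehicle');          // miss
typeLookups.set('Vehicle', 'v2');
typeLookups.get('Vehicle');          // hit
typeLookups.get('Vehicle');          // hit
typeLookups.get('Owner');            // miss
const rate = typeLookups.hitRate();  // 2 hits out of 4 lookups
```

A persistently low hit rate on the entity type cache usually points at invalidation that is too aggressive or at keys that vary more than expected.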

Practical Implementation

To demonstrate these concepts in practice, I’ve created a sample project with working implementations in all three languages discussed: AOM Sample Project.

The repository includes:

- Rust implementation (cargo run) – Type-safe AOM with memory safety

- TypeScript implementation (npx ts-node app.ts) – Gradual typing with modern JavaScript features

- Ruby implementation (ruby app.rb) – Metaprogramming-powered flexibility

Conclusion

The Adaptive Object Model pattern continues to evolve with modern programming languages and database technologies. While the core concepts remain the same, implementation approaches have been refined to take advantage of:

- Type safety in languages like Rust and TypeScript

- Better performance through caching and optimized data structures

- Improved developer experience with modern tooling and language features

- Scalable persistence using document databases and modern storage patterns

The combination of dynamic languages with flexible type systems and schema-less databases provides a powerful foundation for building adaptable systems. From my consulting experience implementing AOM on large projects, I’ve seen mixed results that highlight important considerations. The pattern’s flexibility is both its greatest strength and potential weakness. Without proper architectural discipline, teams can easily create overly complex systems with inconsistent entity types and validation rules. The dynamic nature that makes AOM powerful also requires more sophisticated debugging skills and comprehensive testing strategies than traditional static systems.

In my early implementations using relational databases, we suffered from performance issues due to the excessive joins required to reconstruct entities from the normalized AOM tables. This was before NoSQL and document-oriented databases became mainstream. Modern document databases have fundamentally changed the viability equation by storing AOM entities naturally without the join penalties that plagued earlier implementations.

The practical implementations available at https://github.com/bhatti/aom-sample demonstrate that AOM is not just theoretical but a viable architectural approach for real-world systems. By studying these examples and adapting them to your specific domain requirements, you can build systems that gracefully evolve with changing business needs.